
Enable Data Jobs Monitoring for Spark on Google Cloud Dataproc


Data Jobs Monitoring gives visibility into the performance and reliability of Apache Spark applications on Google Cloud Dataproc.

Requirements

This guide is for Dataproc clusters on Compute Engine. If you are using Dataproc on GKE, refer to the Kubernetes Installation Guide instead.

Dataproc release 2.0.x or later is required. Debian, Rocky Linux, and Ubuntu Dataproc standard images are all supported.
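If you create clusters from scripts, a quick pre-flight check of the image version can catch unsupported releases before you attach the initialization action. The following bash function is a minimal sketch and is not part of Datadog's tooling. `dataproc_image_supported` is a hypothetical name. The function assumes version strings of the form `<major>.<minor>[.<sub-minor>]-<os>`, such as the `2.1-debian11` value you might pass to `gcloud dataproc clusters create --image-version`.

```shell
# Hypothetical pre-flight check (not part of Datadog's tooling).
# Returns 0 when a Dataproc image version such as "2.1-debian11" or
# "2.0.67-ubuntu18" is release 2.0 or later, and 1 otherwise.
dataproc_image_supported() {
  local image_version="$1"
  local major="${image_version%%.*}"   # text before the first "."
  local rest="${image_version#*.}"     # text after the first "."
  local minor="${rest%%[!0-9]*}"       # leading digits of that remainder
  # Assumed format: "<major>.<minor>..."; reject anything else.
  [[ "$major" =~ ^[0-9]+$ && -n "$minor" ]] || return 1
  (( 10#$major >= 2 ))
}
```

For example, `dataproc_image_supported 2.1-debian11 && echo supported` prints `supported`, while `1.5-debian10` makes the function return a non-zero status.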

Setup

Follow these steps to enable Data Jobs Monitoring for GCP Dataproc.

- Store your Datadog API key in GCP Secret Manager (recommended).

- Create and configure your Dataproc cluster.

- Specify service tagging per Spark application.

Store your Datadog API key in Google Cloud Secret Manager (recommended)

- Take note of your Datadog API key.

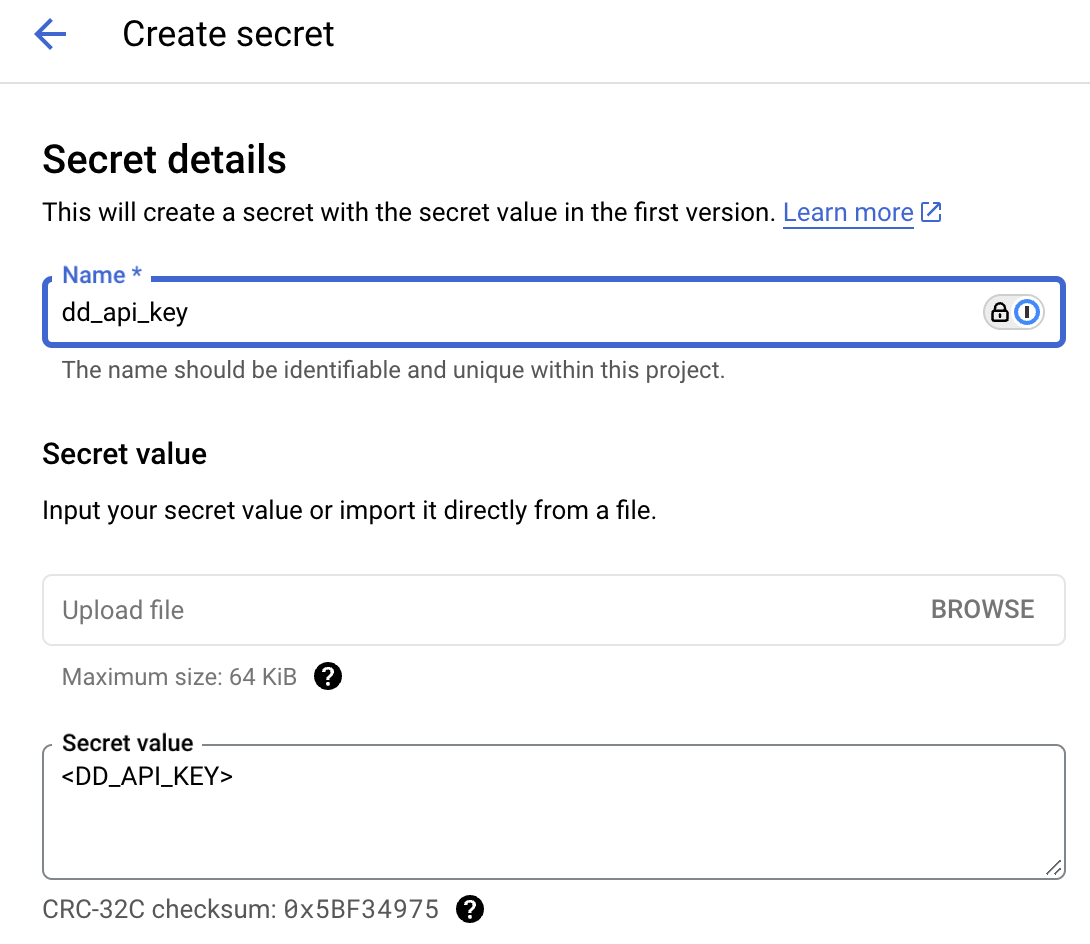

- In GCP Secret Manager, choose Create secret.

  - Under Name, enter a secret name. You can use `dd_api_key`.
  - Under Secret value, paste your Datadog API key in the Secret value text box.
  - Optionally, under Rotation, turn on automatic rotation.
  - Click Create Secret.

- In GCP Secret Manager, open the secret you created. Take note of the Resource ID, which is in the format `projects/<PROJECT_NAME>/secrets/<SECRET_NAME>`.

- Make sure the service account used by your Dataproc cluster has permission to read the secret. By default, this is the Compute Engine default service account. To grant access, copy the associated service account principal, and click Grant Access on the Permissions tab of the secret's page. Assign the `secretmanager.secretAccessor` role, or any other role that has the `secretmanager.versions.access` permission. See the IAM roles documentation for a full description of available roles.
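The initialization script in the next section needs the project and secret names that make up this resource ID. If you copy the full ID, a small bash function can split it for you. This is a minimal sketch. `parse_secret_resource_id` is a hypothetical helper name, and it assumes exactly the `projects/<PROJECT_NAME>/secrets/<SECRET_NAME>` format described above.

```shell
# Hypothetical helper: split a Secret Manager resource ID of the form
# "projects/<PROJECT_NAME>/secrets/<SECRET_NAME>" and print
# "<PROJECT_NAME> <SECRET_NAME>" on one line. Returns 1 for other formats.
parse_secret_resource_id() {
  local resource_id="$1"
  local pattern='^projects/([^/]+)/secrets/([^/]+)$'
  if [[ "$resource_id" =~ $pattern ]]; then
    printf '%s %s\n' "${BASH_REMATCH[1]}" "${BASH_REMATCH[2]}"
  else
    echo "unexpected resource ID format: $resource_id" >&2
    return 1
  fi
}

# Example: set the two variables the initialization script uses.
read -r PROJECT_NAME SECRET_NAME <<< "$(parse_secret_resource_id "projects/my-project/secrets/dd_api_key")"
echo "$PROJECT_NAME $SECRET_NAME"   # prints: my-project dd_api_key
```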

Create and configure your Dataproc cluster

When you create a new Dataproc cluster on Compute Engine in the Google Cloud Console, add an initialization action on the Customize cluster page:

- Save the following script to a GCS bucket that your Dataproc cluster can read. Take note of the path to this script.

```shell
#!/bin/bash

# Set required parameter DD_SITE to your Datadog site.
DD_SITE=<DATADOG_SITE>

# Set required parameter DD_API_KEY with your Datadog API key.
# The commands below assume the API key is stored in GCP Secret Manager, with the secret name dd_api_key and the project <PROJECT_NAME>.
# IMPORTANT: Modify these commands if you choose to manage and retrieve your secret differently.
# Change the project name, which you can find on the secrets page. The resource ID is in the format "projects/<PROJECT_NAME>/secrets/<SECRET_NAME>".
PROJECT_NAME=<PROJECT_NAME>
gcloud config set project "$PROJECT_NAME"
SECRET_NAME=dd_api_key
DD_API_KEY=$(gcloud secrets versions access latest --secret "$SECRET_NAME")

# Optional parameters
# Uncomment the following line to include init script logs when reporting a failure back to Datadog. A failure is reported when the init script fails to start the Datadog Agent.
# export DD_DJM_ADD_LOGS_TO_FAILURE_REPORT=true

# Download and run the latest init script.
# "|| true" makes this script exit successfully even if the Datadog setup fails.
DD_SITE=$DD_SITE DD_API_KEY=$DD_API_KEY bash -c "$(curl -L https://dd-data-jobs-monitoring-setup.s3.amazonaws.com/scripts/dataproc/dataproc_init_latest.sh)" || true
```

The script above sets the required parameters, then downloads and runs the latest Data Jobs Monitoring init script for Dataproc. To pin your script to a specific version, replace the file name in the URL with `dataproc_init_<version_tag>.sh`, for example `dataproc_init_1.5.0.sh`.

- On the Customize cluster page, locate the Initialization Actions section and enter the path where you saved the script in the previous step.

When your cluster is created, this initialization action installs the Datadog Agent and downloads the Java tracer on each node of the cluster.
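If you pin the init script to a specific version, as described above, it can help to build the URL in one place. The following bash function is a minimal sketch. `djm_init_script_url` and `DJM_SCRIPT_BASE` are hypothetical names. The base URL and the `dataproc_init_<version_tag>.sh` naming come from the initialization script on this page.

```shell
# Base location of the Dataproc init scripts, copied from the
# initialization script on this page.
DJM_SCRIPT_BASE="https://dd-data-jobs-monitoring-setup.s3.amazonaws.com/scripts/dataproc"

# Hypothetical helper: print the init script URL for a version tag such as
# "1.5.0", or the URL of the latest script when no tag is given.
djm_init_script_url() {
  local version_tag="${1:-latest}"
  printf '%s/dataproc_init_%s.sh\n' "$DJM_SCRIPT_BASE" "$version_tag"
}

djm_init_script_url 1.5.0
# prints: https://dd-data-jobs-monitoring-setup.s3.amazonaws.com/scripts/dataproc/dataproc_init_1.5.0.sh
```

In the initialization script, you could then download `"$(djm_init_script_url 1.5.0)"` instead of the hard-coded `dataproc_init_latest.sh` URL.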

Specify service tagging per Spark application

Tagging enables you to better filter, aggregate, and compare your telemetry in Datadog. You can configure tags by passing -Ddd.service, -Ddd.env, -Ddd.version, and -Ddd.tags options to your Spark driver and executor extraJavaOptions properties.

In Datadog, each job’s name corresponds to the value you set for -Ddd.service.

```shell
spark-submit \
  --conf spark.driver.extraJavaOptions="-Ddd.service=<JOB_NAME> -Ddd.env=<ENV> -Ddd.version=<VERSION> -Ddd.tags=<KEY_1>:<VALUE_1>,<KEY_2>:<VALUE_2>" \
  --conf spark.executor.extraJavaOptions="-Ddd.service=<JOB_NAME> -Ddd.env=<ENV> -Ddd.version=<VERSION> -Ddd.tags=<KEY_1>:<VALUE_1>,<KEY_2>:<VALUE_2>" \
  application.jar
```
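The example passes identical `-Ddd.*` options to the driver and to the executors. One way to keep the two strings from drifting apart is to build them once. This is a minimal sketch. `dd_java_options` is a hypothetical bash function, and the service, environment, version, and tag values in the example are placeholders.

```shell
# Hypothetical helper: build the -Ddd.* JVM options once so the Spark driver
# and executors receive identical tags.
# Usage: dd_java_options <service> <env> <version> [<key>:<value> ...]
dd_java_options() {
  if (( $# < 3 )); then
    echo "usage: dd_java_options <service> <env> <version> [key:value ...]" >&2
    return 1
  fi
  local service="$1" env="$2" version="$3"
  shift 3
  local opts="-Ddd.service=${service} -Ddd.env=${env} -Ddd.version=${version}"
  if (( $# > 0 )); then
    local IFS=','              # "$*" joins the remaining arguments with commas
    opts+=" -Ddd.tags=$*"
  fi
  printf '%s\n' "$opts"
}

DD_OPTS=$(dd_java_options etl-daily prod 1.2.0 team:data pipeline:orders)
echo "$DD_OPTS"
# prints: -Ddd.service=etl-daily -Ddd.env=prod -Ddd.version=1.2.0 -Ddd.tags=team:data,pipeline:orders
```

You would then pass `--conf spark.driver.extraJavaOptions="$DD_OPTS"` and `--conf spark.executor.extraJavaOptions="$DD_OPTS"` to `spark-submit`.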

Validation

In Datadog, view the Data Jobs Monitoring page to see a list of all your data processing jobs.

Advanced Configuration

Tag spans at runtime

You can set tags on Spark spans at runtime. These tags are applied only to spans that start after the tag is added.

```scala
// Add a tag to all subsequent Spark computations
sparkContext.setLocalProperty("spark.datadog.tags.key", "value")
spark.read.parquet(...)
```

To remove a runtime tag:

```scala
// Remove the tag from all subsequent Spark computations
sparkContext.setLocalProperty("spark.datadog.tags.key", null)
```

Further Reading

Additional helpful documentation, links, and articles: