AWS Lambda metrics

This page discusses metrics for monitoring serverless applications on AWS Lambda. There are three ways to get metrics from AWS Lambda:

- You can get CloudWatch Lambda metrics from the Datadog AWS integration.

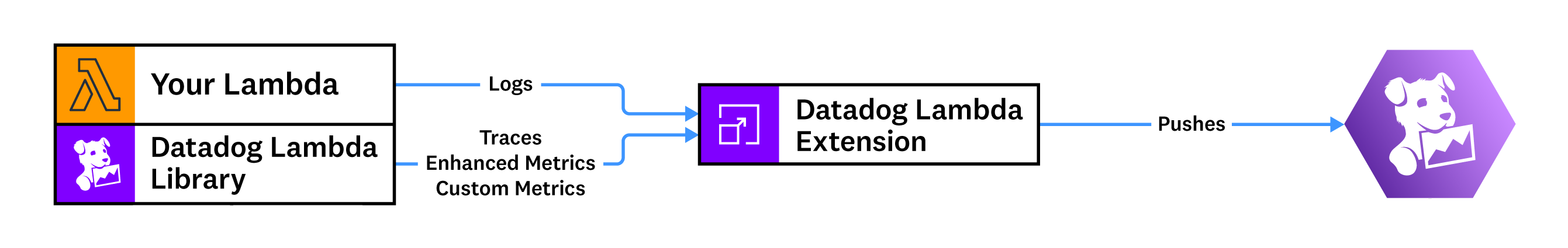

- You can get enhanced metrics by installing Serverless Monitoring for AWS Lambda through the Datadog Lambda Extension.

- You can submit custom metrics to Datadog from your Lambda functions.

Collect metrics from non-Lambda resources

Datadog can also help you collect metrics for AWS managed resources—such as API Gateway, AppSync, and SQS—to help you monitor your entire serverless application. These metrics are enriched with corresponding AWS resource tags.

To collect these metrics, set up the Datadog AWS integration.

Enhanced Lambda metrics

Datadog generates enhanced Lambda metrics from your Lambda runtime out of the box, with low latency, a granularity of several seconds, and detailed metadata for cold starts and custom tags.

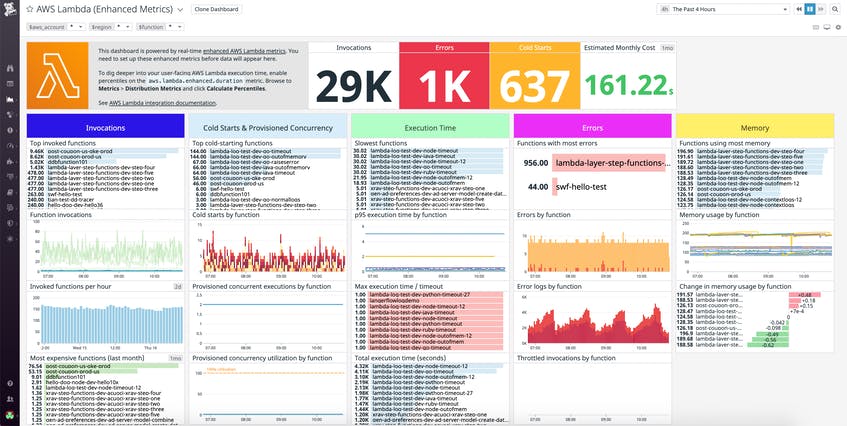

Enhanced Lambda metrics are in addition to the default Lambda metrics enabled with the AWS Lambda integration. Enhanced metrics are distinguished by being in the aws.lambda.enhanced.* namespace. You can view these metrics on the Enhanced Lambda Metrics default dashboard.

The following real-time enhanced Lambda metrics are available, and they are tagged with corresponding aws_account, region, functionname, cold_start, memorysize, executedversion, resource and runtime tags.

These metrics are distributions: you can query them using the count, min, max, sum, and avg aggregations. Enhanced metrics are enabled automatically with Serverless Monitoring but can be disabled by setting the DD_ENHANCED_METRICS environment variable to false on your Lambda function.

aws.lambda.enhanced.invocations: Measures the number of times a function is invoked in response to an event or an invocation of an API call.

aws.lambda.enhanced.errors: Measures the number of invocations that failed due to errors in the function.

aws.lambda.enhanced.max_memory_used: Measures the maximum amount of memory (MB) used by the function.

aws.lambda.enhanced.duration: Measures the elapsed seconds from when the function code starts executing as a result of an invocation to when it stops executing.

aws.lambda.enhanced.billed_duration: Measures the billed amount of time the function ran for (in 100 ms increments).

aws.lambda.enhanced.init_duration: Measures the initialization time (in seconds) of a function during a cold start.

aws.lambda.enhanced.runtime_duration: Measures the elapsed milliseconds from when the function code starts executing to when it returns the response back to the client, excluding the post-runtime duration added by Lambda extension executions.

aws.lambda.enhanced.post_runtime_duration: Measures the elapsed milliseconds from when the function code returns the response back to the client to when the function stops executing, representing the duration added by Lambda extension executions.

aws.lambda.enhanced.response_latency: Measures the elapsed time in milliseconds from when the invocation request is received to when the first byte of response is sent to the client.

aws.lambda.enhanced.response_duration: Measures the elapsed time in milliseconds from when the first byte of response is sent to the client to when the last byte is sent.

aws.lambda.enhanced.produced_bytes: Measures the number of bytes returned by a function.

aws.lambda.enhanced.estimated_cost: Measures the total estimated cost of the function invocation (US dollars).

aws.lambda.enhanced.timeouts: Measures the number of times a function times out.

aws.lambda.enhanced.out_of_memory: Measures the number of times a function runs out of memory.

aws.lambda.enhanced.cpu_total_utilization: Measures the total CPU utilization of the function as a number of cores.

aws.lambda.enhanced.cpu_total_utilization_pct: Measures the total CPU utilization of the function as a percent.

aws.lambda.enhanced.cpu_max_utilization: Measures the CPU utilization on the most utilized core.

aws.lambda.enhanced.cpu_min_utilization: Measures the CPU utilization on the least utilized core.

aws.lambda.enhanced.cpu_system_time: Measures the amount of time the CPU spent running in kernel mode.

aws.lambda.enhanced.cpu_user_time: Measures the amount of time the CPU spent running in user mode.

aws.lambda.enhanced.cpu_total_time: Measures the total amount of time the CPU spent running.

aws.lambda.enhanced.num_cores: Measures the number of cores available.

aws.lambda.enhanced.rx_bytes: Measures the bytes received by the function.

aws.lambda.enhanced.tx_bytes: Measures the bytes sent by the function.

aws.lambda.enhanced.total_network: Measures the bytes sent and received by the function.

aws.lambda.enhanced.tmp_max: Measures the total available space in the /tmp directory.

aws.lambda.enhanced.tmp_used: Measures the space used in the /tmp directory.
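As a rough illustration of how two of these metrics relate to raw values, the sketch below rounds a duration up to the billing increment stated above and adds received and sent bytes. The function names are hypothetical, and the 100 ms increment is taken from the description of billed_duration; this is not how the Datadog Lambda extension computes the metrics.

```python
import math


def billed_duration_ms(duration_ms: float, increment_ms: int = 100) -> int:
    """Round a duration up to the next billing increment (assumed to be 100 ms)."""
    return math.ceil(duration_ms / increment_ms) * increment_ms


def total_network_bytes(rx_bytes: int, tx_bytes: int) -> int:
    """Combine received and sent bytes, mirroring the total_network description."""
    return rx_bytes + tx_bytes
```

Under these assumptions, a 101 ms invocation would be billed as 200 ms, while an invocation of exactly 100 ms stays at 100 ms.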

Submit custom metrics

Create custom metrics from logs or traces

If your Lambda functions are already sending trace or log data to Datadog, and the data you want to query is captured in an existing log or trace, you can generate custom metrics from logs and traces without re-deploying or making any changes to your application code.

With log-based metrics, you can record a count of logs that match a query or summarize a numeric value contained in a log, such as a request duration. Log-based metrics are a cost-efficient way to summarize log data from the entire ingest stream. Learn more about creating log-based metrics.
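The two kinds of log-based metric described above (a count of matching logs, and a summary of a numeric field) can be pictured with a small local sketch. Datadog performs this on ingested logs on its own side; the helper names, the sample records, and the field names below are all assumptions made for illustration.

```python
def count_matching(logs, predicate):
    """Count the log records that satisfy the predicate."""
    return sum(1 for log in logs if predicate(log))


def average_field(logs, field, predicate):
    """Average a numeric field over matching records; None if nothing matches."""
    values = [log[field] for log in logs if predicate(log) and field in log]
    return sum(values) / len(values) if values else None


def is_ok(log):
    """Hypothetical query: keep only records whose status is 'ok'."""
    return log.get("status") == "ok"


# Hypothetical log records; the field names are assumptions for this sketch.
sample_logs = [
    {"status": "error", "duration_ms": 120},
    {"status": "ok", "duration_ms": 80},
    {"status": "ok", "duration_ms": 100},
]

ok_count = count_matching(sample_logs, is_ok)
ok_avg_duration = average_field(sample_logs, "duration_ms", is_ok)
```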

You can also generate metrics from all ingested spans, regardless of whether they are indexed by a retention filter. Learn more about creating span-based metrics.

Submit custom metrics directly from a Lambda function

All custom metrics are submitted as distributions.

Note: Distribution metrics must be submitted with a new name. Do not reuse the name of a previously submitted metric.

Install Serverless Monitoring for AWS Lambda and ensure that you have installed the Datadog Lambda extension.

For example, in Python:

    from datadog_lambda.metric import lambda_metric

    def lambda_handler(event, context):
        lambda_metric(
            "coffee_house.order_value",  # Metric name
            12.45,  # Metric value
            tags=['product:latte', 'order:online']  # Associated tags
        )

Submit historical metrics with the Datadog Forwarder

In most cases, Datadog recommends that you use the Datadog Lambda extension to submit custom metrics. However, the Lambda extension can only submit metrics with a current timestamp.

To submit historical metrics, use the Datadog Forwarder. These metrics can have timestamps within the last hour.
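Before submitting a historical point, it can help to check that its timestamp falls inside the accepted window. The helper below is a minimal sketch, assuming the one-hour limit stated above and assuming that timestamps in the future are not accepted; `is_within_window` is a hypothetical name, not part of any Datadog library.

```python
import time
from typing import Optional

ONE_HOUR_SECONDS = 3600


def is_within_window(
    timestamp: int,
    now: Optional[int] = None,
    window_seconds: int = ONE_HOUR_SECONDS,
) -> bool:
    """Return True if a Unix timestamp (seconds) lies within the last window."""
    current = int(time.time()) if now is None else now
    return current - window_seconds <= timestamp <= current
```

Passing `now` explicitly makes the check deterministic in tests; in a handler you would normally omit it.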

Start by installing Serverless Monitoring for AWS Lambda. Ensure that you have installed the Datadog Lambda Forwarder.

Then, for example, in Python:

    import time

    from datadog_lambda.metric import lambda_metric

    def lambda_handler(event, context):
        lambda_metric(
            "coffee_house.order_value",  # Metric name
            12.45,  # Metric value
            tags=['product:latte', 'order:online']  # Associated tags
        )

        # Submit a metric with a timestamp that is within the last 20 minutes
        lambda_metric(
            "coffee_house.order_value",  # Metric name
            12.45,  # Metric value
            timestamp=int(time.time()),  # Unix epoch in seconds
            tags=['product:latte', 'order:online']  # Associated tags
        )

Submitting many data points

Using the Forwarder to submit many data points for the same metric and the same set of tags (for example, inside a big for-loop) may impact Lambda performance and CloudWatch cost.

You can aggregate the data points in your application to avoid the overhead.

For example, in Python:

    from datadog_lambda.metric import lambda_metric

    def lambda_handler(event, context):
        # Inefficient when event['Records'] contains many records
        for record in event['Records']:
            lambda_metric("record_count", 1)

        # Improved implementation (use this instead of the loop above):
        # count locally, then submit a single data point
        record_count = 0
        for record in event['Records']:
            record_count += 1
        lambda_metric("record_count", record_count)
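When the data points carry different tags, the same idea can be applied per metric name and tag set. The following is a sketch under the assumption that summing is the right way to combine your values (true for count-like metrics, not for gauges); `aggregate_points` is a hypothetical helper and not part of the Datadog library.

```python
from collections import defaultdict


def aggregate_points(points):
    """Sum values per (metric name, sorted tag tuple).

    `points` is an iterable of (name, value, tags) triples.
    """
    totals = defaultdict(float)
    for name, value, tags in points:
        key = (name, tuple(sorted(tags)))
        totals[key] += value
    return dict(totals)


# Hypothetical data points collected while processing one event.
collected = [
    ("record_count", 1, ["type:a"]),
    ("record_count", 1, ["type:a"]),
    ("record_count", 1, ["type:b"]),
]
aggregated = aggregate_points(collected)
```

Each entry in `aggregated` can then be submitted once, for example with `lambda_metric(name, total, tags=list(tags))`, so three data points become two submissions here.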

Understanding distribution metrics

When Datadog receives multiple count or gauge metric points that share the same timestamp and set of tags, only the most recent one counts. This works for host-based applications because metric points get aggregated by the Datadog Agent and tagged with a unique host tag.

A Lambda function may launch many concurrent execution environments when traffic increases. The function may submit count or gauge metric points that overwrite each other and cause undercounted results. To avoid this problem, custom metrics generated by Lambda functions are submitted as distributions because distribution metric points are aggregated on the Datadog backend, and every metric point counts.
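The undercounting described above can be reproduced with a toy model. This sketch only simulates the two behaviors locally: the storage model, function names, and sample tags are assumptions, and it does not reflect how the Datadog backend is implemented.

```python
def last_write_wins_total(points):
    """Model count/gauge storage: one value per (timestamp, tags) key."""
    stored = {}
    for timestamp, tags, value in points:
        stored[(timestamp, tags)] = value  # later points overwrite earlier ones
    return sum(stored.values())


def distribution_total(points):
    """Model distribution storage: every submitted point contributes."""
    return sum(value for _, _, value in points)


# Three concurrent execution environments each report one order at the
# same second with the same tags (hypothetical values).
concurrent_points = [
    (1700000000, ("functionname:checkout",), 1),
    (1700000000, ("functionname:checkout",), 1),
    (1700000000, ("functionname:checkout",), 1),
]

overwritten = last_write_wins_total(concurrent_points)
aggregated_total = distribution_total(concurrent_points)
```

In this model the overwriting store reports 1 order while the distribution reports 3, which is the gap that submitting distributions is meant to close.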

Distributions provide avg, sum, max, min, and count aggregations by default. On the Metrics Summary page, you can enable percentile aggregations (p50, p75, p90, p95, p99) and also manage tags. To monitor a distribution for a gauge metric type, use avg for both the time and space aggregations. To monitor a distribution for a count metric type, use sum for both the time and space aggregations. Refer to the guide Query to the Graph for how time and space aggregations work.
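To show what these aggregations mean for a set of submitted points, here is a small local sketch. The percentile uses the simple nearest-rank method, which is an assumption chosen for clarity and is not necessarily the method Datadog uses; the function names are hypothetical.

```python
import math


def summarize_distribution(values):
    """Compute the default aggregations over the points in one interval."""
    return {
        "avg": sum(values) / len(values),
        "sum": sum(values),
        "max": max(values),
        "min": min(values),
        "count": len(values),
    }


def nearest_rank_percentile(values, p):
    """Return the p-th percentile (0 < p <= 100) by the nearest-rank method."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


# Hypothetical points submitted for one metric and tag set.
points = [1, 2, 3, 4, 10]
summary = summarize_distribution(points)
p50 = nearest_rank_percentile(points, 50)
```

For a count-like metric you would read `summary["sum"]`, and for a gauge-like metric `summary["avg"]`, matching the guidance above.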

Understanding your metrics usage, volume, and pricing in Datadog

The Metrics Summary page of the Datadog app shows granular information about the custom metrics you’re ingesting and their tag cardinality, and provides tools for managing those metrics. You can view all serverless custom metrics under the ‘Serverless’ tag in the Distribution Metric Origin facet panel. You can also control custom metrics volumes and costs with Metrics without Limits™.