Create a Log Pipeline

Overview

This page walks Technology Partners through creating a log pipeline. A log pipeline is required if your integration sends logs to Datadog.

Log integrations

Use the Logs Ingestion HTTP endpoint to send logs to Datadog.

Development process

Guidelines

When creating a log pipeline, consider the following best practices:

- Map your data to Datadog’s standard attributes.

  - Centralizing logs from various technologies and applications can generate tens or hundreds of different attributes in a Log Management environment. Integrations must rely on the standard naming convention as much as possible.

- Set the `source` tag to the integration name.

  - Datadog recommends setting the `source` tag to `<integration_name>` and the `service` tag to the name of the service that produces the telemetry. For example, the `service` tag can be used to differentiate logs by product line. For cases where there aren’t different services, set `service` to the same value as `source`.

  - The `source` and `service` tags must be non-editable by the user, because the tags are used to enable integration pipelines and dashboards. The tags can be set in the payload or through the query parameter, for example, `?ddsource=example&service=example`. The `source` and `service` tags must be in lowercase.

- The integration must support all Datadog sites.

  - The user must be able to choose between the different Datadog sites whenever applicable. See Getting Started with Datadog Sites for more information about site differences. Your Datadog site endpoint is `http-intake.logs.` followed by your Datadog site.

- Allow users to attach custom tags while setting up the integration.

  - Datadog recommends sending manual user tags as key-value attributes in the JSON body. If it’s not possible to add manual tags to the logs, you can send the tags using the `ddtags=<TAGS>` query parameter. See the Send Logs API documentation for examples.

- Send data without arrays in the JSON body whenever possible.

  - While it’s possible to send some data as tags, Datadog recommends sending data in the JSON body and avoiding arrays. This gives you more flexibility in the operations you can perform on the data in Datadog Log Management.

- Do not log Datadog API keys.

  - Datadog API keys can be passed either in a header or as part of the HTTP path. See the Send Logs API documentation for examples. Datadog recommends using methods that do not log the API key in your setup.

- Do not use Datadog application keys.

  - The Datadog application key is different from the API key and is not required to send logs using the HTTP endpoint.
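The guidelines above can be pulled together in a small client-side sketch. The following Python builds, but does not send, a request to the Logs HTTP intake: it checks the chosen Datadog site against an allowlist, forces lowercase `source` and `service` values that user tags cannot override, keeps user tags as flat key-value attributes, rejects array values, and passes the API key in a header instead of the URL. The `/api/v2/logs` path, the `DD-API-KEY` header, and the `ddsource` field follow the public Send Logs API; the site allowlist, the integration name `example`, and the function `build_log_request` are assumptions for illustration, so check the Send Logs API documentation before relying on them.

```python
import json
import urllib.request

# Assumed allowlist of Datadog site domains; it may be incomplete, so check
# Getting Started with Datadog Sites for the current list.
SUPPORTED_SITES = {
    "datadoghq.com",
    "us3.datadoghq.com",
    "us5.datadoghq.com",
    "datadoghq.eu",
    "ap1.datadoghq.com",
    "ddog-gov.com",
}

INTEGRATION_NAME = "example"  # hypothetical integration name


def build_log_request(site, api_key, message, user_tags, service=None):
    """Build (without sending) a POST request for the Logs HTTP intake."""
    if site not in SUPPORTED_SITES:
        raise ValueError(f"unsupported Datadog site: {site!r}")
    for key, value in user_tags.items():
        # Keep attributes flat: arrays limit what Log Management can do.
        if isinstance(value, (list, tuple)):
            raise ValueError(f"tag {key!r} must not be an array")

    source = INTEGRATION_NAME.lower()
    # With only one service, reuse the source value for service.
    service = (service or source).lower()

    log = dict(user_tags)  # user tags as key-value attributes in the body
    # Set these last so user-supplied tags cannot override them.
    log["ddsource"] = source
    log["service"] = service
    log["message"] = message

    return urllib.request.Request(
        f"https://http-intake.logs.{site}/api/v2/logs",
        # The outer list is the batch of log entries, not an attribute array.
        data=json.dumps([log]).encode("utf-8"),
        method="POST",
        headers={
            # API key in a header (not the URL path) so it is less likely to
            # be logged. No application key is needed to send logs.
            "DD-API-KEY": api_key,
            "Content-Type": "application/json",
        },
    )
```

Sending the request (for example with `urllib.request.urlopen`) is deliberately left out so the sketch has no side effects; a real integration would also need retries and error handling.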

Set up the log integration assets in your Datadog partner account

For information about becoming a Datadog Technology Partner and gaining access to an integration development sandbox, read Build an Integration.

Log pipeline requirements

Logs sent to Datadog are processed in log pipelines to standardize them for easier search and analysis.

To set up a log pipeline:

- From the Pipelines page, click + New Pipeline.

- In the Filter field, enter a unique `source` tag that defines the log source for the Technology Partner’s logs, for example, `source:okta` for the Okta integration. Note: Make sure that logs sent through the integration are tagged with the correct source tag before they are sent to Datadog.

- Optionally, add tags and a description.

- Click Create.

You can add processors within your pipelines to restructure your data and generate attributes.

Requirements:

- Use the date remapper to define the official timestamp for logs.

- Use a status remapper to remap the `status` of a log, or a category processor for statuses mapped to a range (as with HTTP status codes).

- Use the attribute remapper to remap attribute keys to standard Datadog attributes. For example, an attribute key that contains the client IP must be remapped to `network.client.ip` so Datadog can display Technology Partner logs in out-of-the-box dashboards. Remove original attributes when remapping by using `preserveSource:false` to avoid duplicates.

- Use the service remapper to remap the `service` attribute or set it to the same value as the `source` attribute.

- Use the grok processor to extract values in the logs for better searching and analytics. To maintain optimal performance, the grok parser must be specific. Avoid wildcard matches.

- Use the message remapper to define the official message of the log and make certain attributes searchable by full text.
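To make the processor requirements concrete, the following sketch emulates in plain Python what a minimal pipeline does to one raw log line: a specific regular expression stands in for a grok parser, attribute remappers rename fields to the standard attributes `network.client.ip` and `http.status_code` and drop the originals (the effect of `preserveSource:false`), and date, status, service, and message steps promote values to the official fields. This is not how Datadog implements processors; the raw line format, the field names `clientIp`, `eventTime`, `statusCode`, and `msg`, all function names, and the 4xx/5xx status mapping are hypothetical.

```python
import re
from datetime import datetime, timezone

# Grok-parser stand-in. The pattern is deliberately specific (IPv4 address,
# bracketed time, three-digit status, quoted text) instead of using .* wildcards.
LINE_RE = re.compile(
    r'^(?P<clientIp>\d{1,3}(?:\.\d{1,3}){3}) '
    r'\[(?P<eventTime>[^\]]+)\] '
    r'(?P<statusCode>\d{3}) '
    r'"(?P<msg>[^"]*)"$'
)


def parse_line(line):
    """Extract named attributes from one raw log line."""
    match = LINE_RE.match(line)
    if match is None:
        raise ValueError(f"line does not match the pattern: {line!r}")
    return match.groupdict()


def remap_attribute(log, source_key, target_key, preserve_source=False):
    """Attribute-remapper stand-in. Dotted targets stay flat keys here."""
    if source_key in log:
        log[target_key] = log[source_key]
        if not preserve_source:
            del log[source_key]  # avoid duplicate attributes
    return log


def http_status_category(code):
    """Category-processor stand-in: map an HTTP status range to a status."""
    if 500 <= code <= 599:
        return "error"
    if 400 <= code <= 499:
        return "warning"
    return "info"


def process(line, source):
    log = parse_line(line)
    remap_attribute(log, "clientIp", "network.client.ip")
    remap_attribute(log, "statusCode", "http.status_code")
    log["http.status_code"] = int(log["http.status_code"])
    # Date remapper: the event time becomes the official timestamp (in UTC).
    timestamp = datetime.fromisoformat(log.pop("eventTime")).astimezone(timezone.utc)
    status = http_status_category(log["http.status_code"])
    # Service fallback: no service in the line, so reuse the source value.
    service = log.pop("service", source)
    # Message remapper: promote the free-text field to the official message.
    message = log.pop("msg")
    return {
        "timestamp": timestamp,
        "status": status,
        "service": service,
        "message": message,
        "attributes": log,
    }
```

Keeping each step as its own small function mirrors how the requirements split parsing, remapping, and status assignment across separate processors.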

For a list of all log processors, see Processors.

Tip: Take the free course Going Deeper with Logs Processing for an overview of writing processors and leveraging standard attributes.

Facet requirements

You can optionally create facets in the Log Explorer. Facets are specific attributes that can be used to filter and narrow down search results. While facets are not strictly necessary for filtering search results, they play a crucial role in helping users understand the available dimensions for refining their search.

Measures are a specific type of facet used for searches over a range. For example, adding a measure for latency duration allows users to search for all logs above a certain latency. Note: Define the unit of a measure facet based on what the attribute represents.

To add a facet or measure:

- Click on a log that contains the attribute you want to add a facet or measure for.

- In the log panel, click the Cog icon next to the attribute.

- Select Create facet/measure for @attribute.

- For a measure, to define the unit, click Advanced options. Select the unit based on what the attribute represents.

- Click Add.

To help navigate the facet list, facets are grouped together. For fields specific to the integration logs, create a single group with the same name as the source tag.

- In the log panel, click the Cog icon next to the attribute that you want in the new group.

- Select Edit facet/measure for @attribute. If there isn’t a facet for the attribute yet, select Create facet/measure for @attribute.

- Click Advanced options.

- In the Group field, enter the name of the group matching the source tag and a description of the new group, and select New group.

- Click Update.

Guidelines

- Before creating a new facet for an integration, check whether the attribute should be remapped to a standard attribute instead. Datadog automatically adds facets for standard attributes when the log pipeline is published.

- Not all attributes are meant to be used as facets. Non-faceted attributes are still searchable. In integrations, facets serve three purposes:

  - Facets that are measures allow units to be associated with an attribute. For example, an attribute `response_time` could have a unit of `ms` or `s`.

  - Facets provide a straightforward filtering interface for logs. Each facet is listed under its group heading and can be used for filtering.

  - Facets allow attributes with low readability to be renamed with a label that is easier to understand. For example: `@deviceCPUper` → Device CPU Utilization Percentage

Requirements:

- Use standard attributes as much as possible.

- All facets that are not mapped to reserved or standard attributes should be namespaced with the integration name.

- A facet has a source. It can be `log` for attributes or `tag` for tags.

- A facet has a type (String, Boolean, Double, or Integer) that matches the type of the attribute. If the type of the attribute’s value does not match the facet’s type, the attribute is not indexed with the facet.

- Double and Integer facets can have a unit. Units are composed of a family (such as time or bytes) and a name (such as millisecond or gibibyte).

- A facet is stored in groups and has a description.

- If you remap an attribute and keep both the original and the remapped attribute, define a facet on only one of them.
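The facet requirements above can be expressed as a checklist. The sketch below assumes a hypothetical facet description with the keys `path`, `source`, `type`, `unit`, `group`, and `description` (not Datadog’s export schema), treats "namespaced" as a `<integration>.` prefix, and uses an assumed, simplified mapping from facet types to Python value types to illustrate that a value whose type does not match the facet is not indexed.

```python
# Hypothetical facet description: the keys used below ("path", "source",
# "type", "unit", "group", "description") are illustrative, not Datadog's schema.
STANDARD_ATTRIBUTES = {"network.client.ip", "http.status_code"}  # small subset

# Assumed, simplified mapping from facet types to Python value types.
FACET_TYPES = {
    "String": (str,),
    "Boolean": (bool,),
    "Integer": (int,),
    "Double": (float,),
}


def facet_problems(facet, integration):
    """Return the requirements on this page that a facet definition breaks."""
    problems = []
    if facet.get("source") not in ("log", "tag"):
        problems.append("source must be 'log' or 'tag'")
    if facet.get("type") not in FACET_TYPES:
        problems.append("type must be String, Boolean, Double, or Integer")
    if "unit" in facet and facet.get("type") not in ("Double", "Integer"):
        problems.append("only Double and Integer facets can have a unit")
    path = facet.get("path", "")
    if path not in STANDARD_ATTRIBUTES:
        # Custom facets: namespace with the integration name and put them in
        # a group named after the source tag.
        if not path.startswith(integration + "."):
            problems.append(f"{path!r} is not namespaced with {integration!r}")
        if facet.get("group") != integration:
            problems.append(f"{path!r} should be in the {integration!r} group")
    if not facet.get("description"):
        problems.append("facet needs a description")
    return problems


def value_is_indexed(value, facet_type):
    """A value is indexed only if its type matches the facet type."""
    # bool is a subclass of int in Python, so keep it out of numeric facets.
    if facet_type in ("Integer", "Double") and isinstance(value, bool):
        return False
    return isinstance(value, FACET_TYPES[facet_type])
```

For example, a `Double` facet `example.response_time` with the unit `("time", "millisecond")` in the `example` group passes, while the same attribute without a namespace, in another group, or typed as `String` with a unit is flagged.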

Review and deploy the integration

Datadog reviews the log integration based on the guidelines and requirements documented on this page and provides feedback to the Technology Partner through GitHub. In turn, the Technology Partner reviews the feedback and makes changes accordingly.

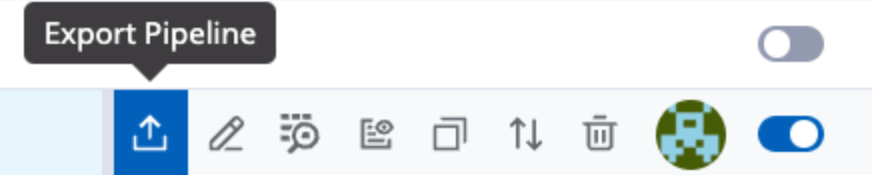

To start a review process, export your log pipeline and relevant custom facets using the Export icon on the Logs Configuration page.

Include sample raw logs with all the attributes you expect your integration to send to Datadog. Raw logs consist of the raw messages generated directly by the source before they are ingested by Datadog.

Exporting your log pipeline produces two YAML files:

- One with the log pipeline, which includes custom facets, attribute remappers, and grok parsers.

- One with the raw example logs with an empty result.

Note: Depending on your browser, you may need to adjust your settings to allow file downloads.

After you’ve downloaded these files, navigate to your integration’s pull request on GitHub and add them to the Assets > Logs directory. If a Logs folder does not exist yet, you can create one.

Validations are run automatically in your pull request.

Three common validation errors are:

- The `id` field in both YAML files: Ensure that the `id` field matches the `app_id` field in your integration’s `manifest.json` file to connect your pipeline to your integration.

- Not providing the result of running your raw logs against your pipeline: If the resulting output from the validation is accurate, take that output and add it to the `result` field in the YAML file containing the raw example logs.

- Sending `service` as a parameter instead of in the log payload: In this case, you must include the `service` field below your log samples within the YAML file.
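Before pushing to the pull request, you could pre-check the three common validation errors locally. This sketch assumes the two exported YAML files have already been parsed into dictionaries (for example with a third-party YAML library, omitted here to keep the example standard-library only); the `tests`, `sample`, `result`, and `service` keys are assumptions about the file layout, and `check_log_assets` is a hypothetical helper, not part of Datadog’s tooling.

```python
import json


def check_log_assets(manifest_text, pipeline, tests, service_sent_as_param):
    """Pre-check the three common validation errors described above.

    `pipeline` and `tests` are the two exported YAML files after parsing;
    the keys read from them here are assumptions.
    """
    app_id = json.loads(manifest_text)["app_id"]
    problems = []
    # 1. Both YAML files must use the integration's app_id as their id.
    for name, doc in (("pipeline", pipeline), ("tests", tests)):
        if doc.get("id") != app_id:
            problems.append(
                f"{name} id {doc.get('id')!r} does not match app_id {app_id!r}"
            )
    for i, case in enumerate(tests.get("tests", [])):
        # 2. The exported result starts empty; it must hold the pipeline output.
        if not case.get("result"):
            problems.append(f"sample {i}: result is empty")
        # 3. A service sent as a query parameter must be listed per sample.
        if service_sent_as_param and not case.get("service"):
            problems.append(f"sample {i}: missing service field")
    return problems
```

The automated validations in the pull request remain the source of truth; a local check like this only shortens the feedback loop.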

Once validations pass, Datadog creates and deploys the new log integration assets. If you have any questions, add them as comments in your pull request. A Datadog team member will respond within 2-3 business days.
