Trace An LLM Application

Overview

This guide uses the LLM Observability SDK for Python. If your application is written in another language, you can create traces by calling the API instead.

Setup

Jupyter notebooks

To better understand LLM Observability terms and concepts, you can explore the examples in the LLM Observability Jupyter Notebooks repository. These notebooks provide a hands-on experience, and allow you to apply these concepts in real time.

Command line

To generate an LLM Observability trace, you can run a Python script.

Prerequisites

- LLM Observability requires a Datadog API key. For more information, see the instructions for creating an API key.

- The following example script uses OpenAI, but you can modify it to use a different provider. To run the script as written, you need:

- An OpenAI API key stored in your environment as

OPENAI_API_KEY. To create one, see Account Setup and Set up your API key in the official OpenAI documentation. - The OpenAI Python library installed. See Setting up Python in the official OpenAI documentation for instructions.

- An OpenAI API key stored in your environment as

Install the SDK by adding the

ddtraceandopenaipackages:pip install ddtrace pip install openaiCreate a Python script and save it as

quickstart.py. This Python script makes a single OpenAI call.quickstart.py

import os from openai import OpenAI oai_client = OpenAI(api_key=os.environ.get("OPENAI_API_KEY")) completion = oai_client.chat.completions.create( model="gpt-3.5-turbo", messages=[ {"role": "system", "content": "You are a helpful customer assistant for a furniture store."}, {"role": "user", "content": "I'd like to buy a chair for my living room."}, ], )Run the Python script with the following shell command. This sends a trace of the OpenAI call to Datadog.

DD_LLMOBS_ENABLED=1 DD_LLMOBS_ML_APP=onboarding-quickstart \ DD_API_KEY=<YOUR_DATADOG_API_KEY> DD_SITE= \ DD_LLMOBS_AGENTLESS_ENABLED=1 ddtrace-run python quickstart.pyFor more information about required environment variables, see the SDK documentation.

Note:

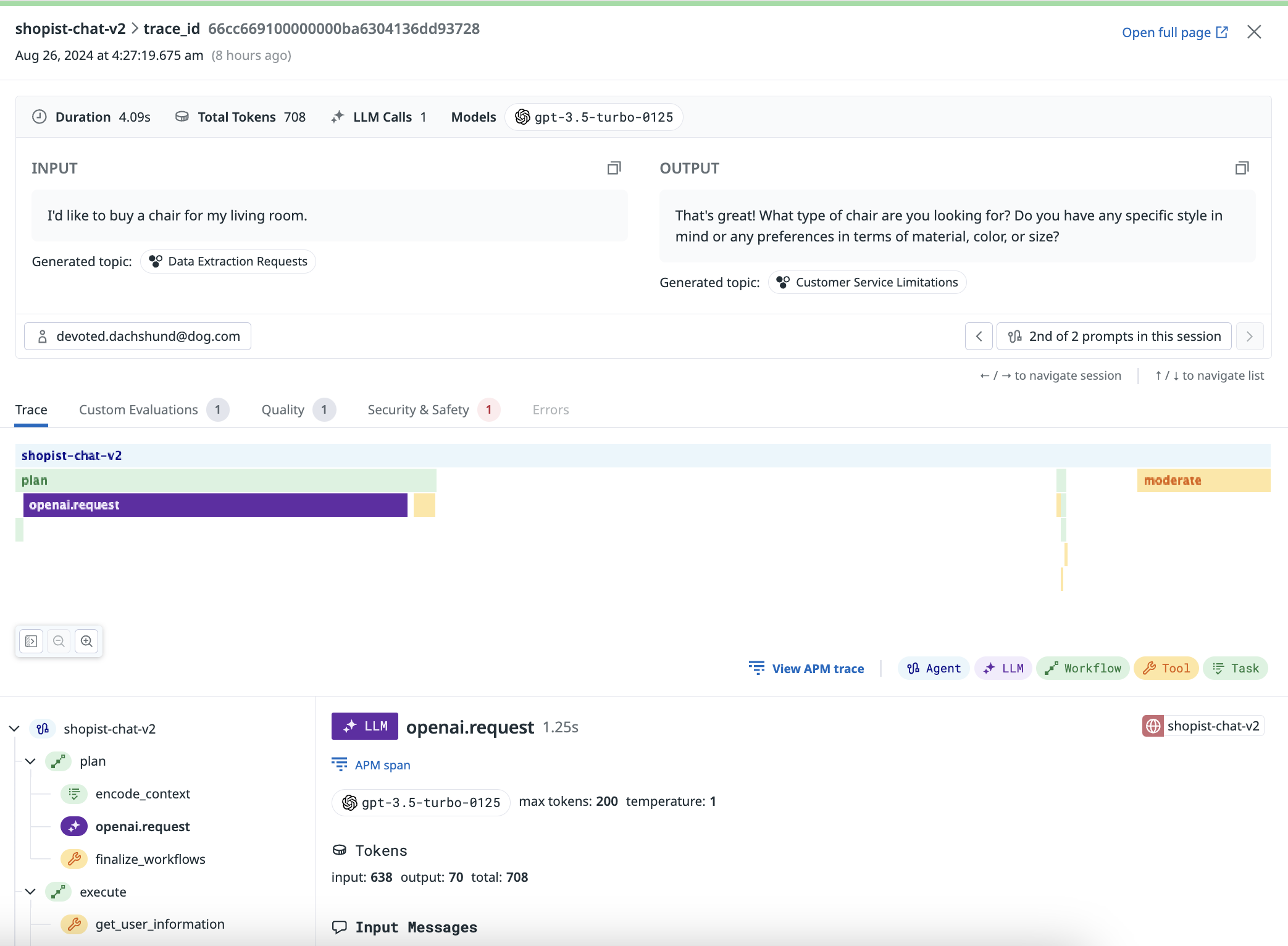

DD_LLMOBS_AGENTLESS_ENABLEDis only required if you do not have the Datadog Agent running. If the Agent is running in your production environment, make sure this environment variable is unset.View the trace of your LLM call on the Traces tab of the LLM Observability page in Datadog.
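If no trace shows up, a missing or empty environment variable is one possible cause. The following is a minimal sketch of a preflight check, assuming you export the variables in your shell instead of passing them inline on the command. The helper name `missing_env_vars` and the `REQUIRED_VARS` list are hypothetical and not part of the SDK; the list simply mirrors the variables used in the quickstart command and script.

```python
import os

# Hypothetical list: the variables the quickstart command and script rely on.
# DD_LLMOBS_AGENTLESS_ENABLED is left out because it is only needed without an Agent.
REQUIRED_VARS = [
    "DD_LLMOBS_ENABLED",
    "DD_LLMOBS_ML_APP",
    "DD_API_KEY",
    "DD_SITE",
    "OPENAI_API_KEY",
]


def missing_env_vars(env, required=REQUIRED_VARS):
    """Return the names in `required` that are unset or empty in `env`."""
    return [name for name in required if not env.get(name)]


if __name__ == "__main__":
    missing = missing_env_vars(os.environ)
    if missing:
        print("Missing or empty variables: " + ", ".join(missing))
    else:
        print("All quickstart variables are set.")
```

Run the check in the same shell session you use for `ddtrace-run`, so that it sees the same environment.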

The trace you see is composed of a single LLM span. The `ddtrace-run` command automatically traces LLM calls made through any of Datadog’s supported integrations.

If your application involves more elaborate prompting, or complex chains or workflows that use LLMs, you can trace it by following the Setup documentation and the SDK documentation.
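As a rough illustration of what tracing a multi-step application can look like, the sketch below wraps two functions with the `workflow` and `llm` decorators from `ddtrace.llmobs.decorators`. The function names `handle_customer` and `suggest_chair` are hypothetical, and the model call is replaced by a canned reply so the example runs without network access. The `ImportError` fallback is an assumption added only so the sketch runs where `ddtrace` is not installed; in that case no spans are created. Treat the SDK documentation as the authority on decorator options.

```python
try:
    from ddtrace.llmobs.decorators import llm, workflow
except ImportError:
    # Fallback so the sketch still runs without ddtrace; no spans are created.
    def _passthrough(func=None, **_kwargs):
        # Supports both the bare @decorator and the @decorator(...) forms.
        return func if func is not None else (lambda f: f)

    llm = workflow = _passthrough


@llm(model_name="gpt-3.5-turbo", model_provider="openai")
def suggest_chair(request: str) -> str:
    # Placeholder for a real model call; returns a canned reply.
    return f"Here are some chairs that match: {request}"


@workflow
def handle_customer(request: str) -> str:
    # A pre-processing step followed by the LLM step, grouped under one workflow.
    cleaned = request.strip()
    return suggest_chair(cleaned)


reply = handle_customer("  a chair for my living room  ")
print(reply)
```

With LLM Observability enabled, the intent is that the workflow appears as a parent span with the LLM call nested beneath it, instead of the single LLM span produced by the quickstart.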

Further Reading

Additional helpful documentation, links, and articles: