Forwarding Logs to Custom Destinations

Log forwarding is not available for the Government site. Contact your account representative for more information.

Overview

Log Forwarding allows you to send logs from Datadog to custom destinations like Splunk, Elasticsearch, and HTTP endpoints. This means that you can use Log Pipelines to centrally collect, process, and standardize your logs in Datadog. Then, send the logs from Datadog to other tools to support individual teams’ workflows. You can choose to forward any of the ingested logs, whether or not they are indexed, to custom destinations. Logs are forwarded in JSON format and compressed with GZIP.
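As a rough illustration of what a receiving endpoint has to do with a JSON body compressed with GZIP, the sketch below decompresses and parses one request body. The function name `decode_forwarded_body` and the sample payload are hypothetical; the exact shape of the forwarded JSON is not described here, so the code does not depend on it.

```python
import gzip
import json


def decode_forwarded_body(body: bytes):
    """Decompress a GZIP-compressed request body and parse it as JSON."""
    return json.loads(gzip.decompress(body).decode("utf-8"))


# Hypothetical payload standing in for a forwarded batch; the real shape may differ.
sample = gzip.compress(
    json.dumps([{"message": "hello", "service": "web"}]).encode("utf-8")
)
print(decode_forwarded_body(sample))
```

A custom HTTP destination would typically run this kind of step before handing the parsed logs to its own storage or processing.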

Note: Only Datadog users with the logs_write_forwarding_rules permission can create, edit, and delete custom destinations for forwarding logs.

If a forwarding attempt fails (for example, if your destination temporarily becomes unavailable), Datadog retries periodically for 2 hours using an exponential backoff strategy. The first attempt is made following a 1-minute delay. For subsequent retries, the delay increases progressively to a maximum of 8-12 minutes (10 minutes with 20% variance).
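The retry schedule described above can be approximated with a short simulation. This is only a sketch: the 1-minute first delay, the 10-minute cap with 20% variance, and the 2-hour window come from the text, while the doubling growth factor and the choice to apply variance only once the cap is reached are assumptions.

```python
import random

FIRST_DELAY_MIN = 1.0     # from the text: first attempt after a 1-minute delay
MAX_DELAY_MIN = 10.0      # from the text: delays level off around 10 minutes
VARIANCE = 0.20           # from the text: 20% variance, giving 8-12 minutes
RETRY_WINDOW_MIN = 120.0  # from the text: retries continue for 2 hours
GROWTH_FACTOR = 2.0       # assumption: the actual growth rate is not documented


def retry_delays(rng=random.random):
    """Return the simulated delays (in minutes) that fit in the retry window."""
    delays = []
    elapsed = 0.0
    base = FIRST_DELAY_MIN
    while True:
        if base >= MAX_DELAY_MIN:
            # Assumption: variance is applied only to the capped delay.
            delay = MAX_DELAY_MIN * (1 + VARIANCE * (2 * rng() - 1))
        else:
            delay = base
        if elapsed + delay > RETRY_WINDOW_MIN:
            break
        delays.append(delay)
        elapsed += delay
        base = min(base * GROWTH_FACTOR, MAX_DELAY_MIN)
    return delays


print(retry_delays())
```

Under these assumptions the delays grow as 1, 2, 4, and 8 minutes, then stay between 8 and 12 minutes until the 2-hour window is used up.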

The following metrics report on logs that have been forwarded successfully, including logs that were sent successfully after retries, as well as logs that were dropped.

- datadog.forwarding.logs.bytes

- datadog.forwarding.logs.count

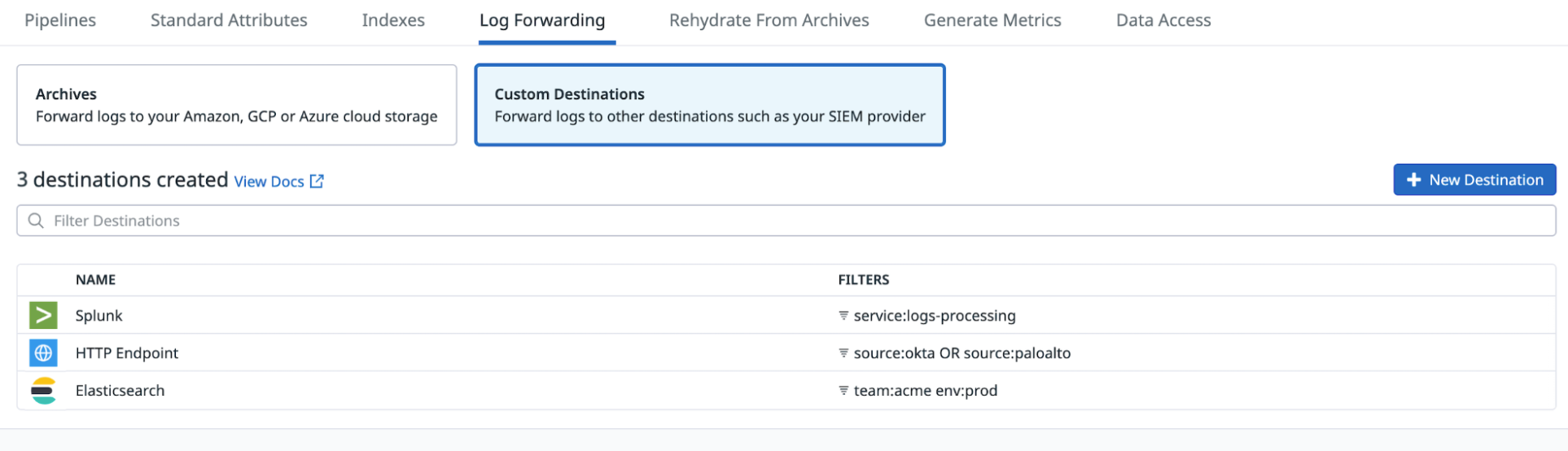

Set up log forwarding to custom destinations

- Add webhook IPs from the IP ranges list to the allowlist.

- Navigate to Log Forwarding.

- Select Custom Destinations.

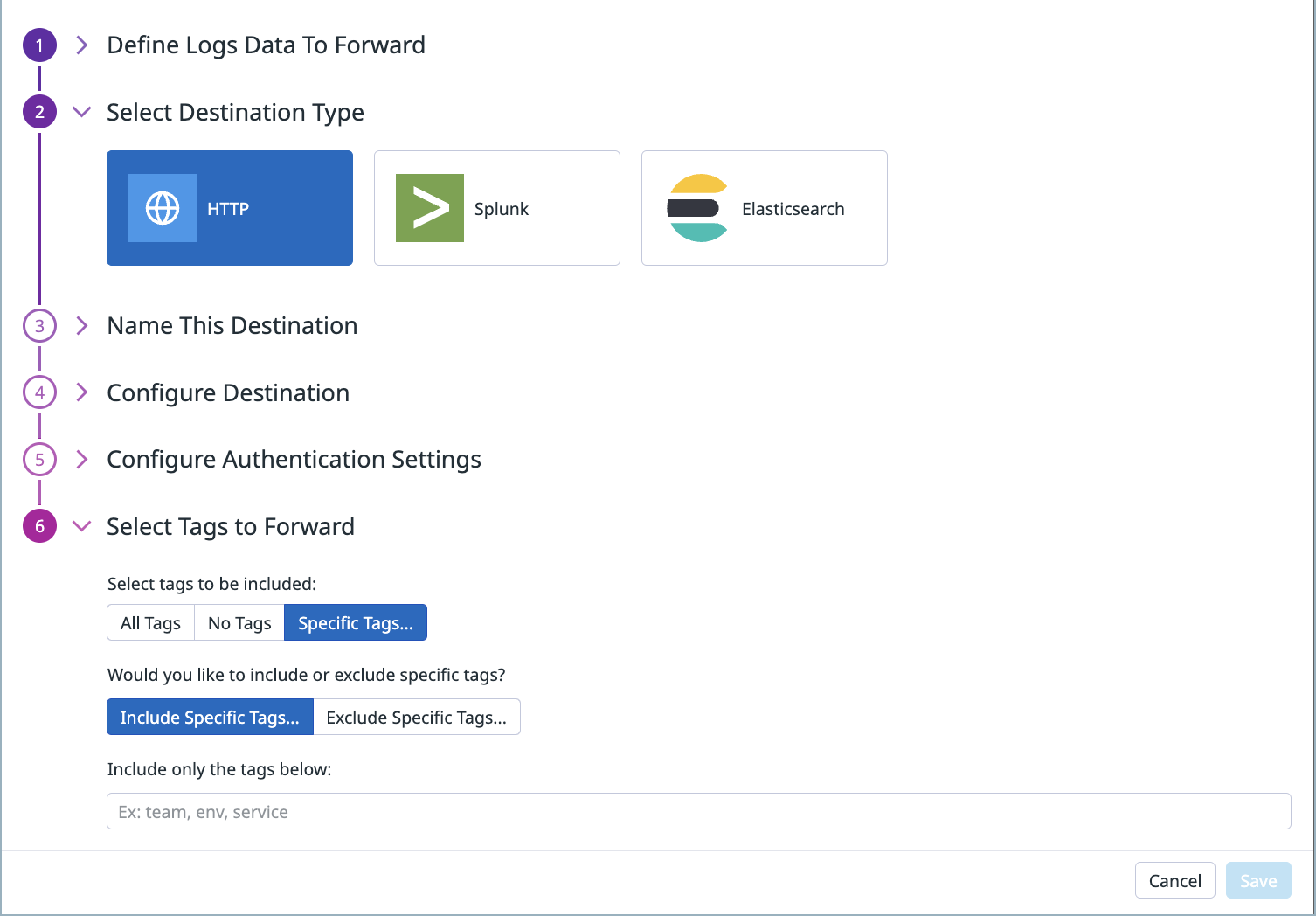

- Click New Destination.

- Enter the query to filter your logs for forwarding. See Search Syntax for more information.

- Select the Destination Type.

For an HTTP destination:

- Enter a name for the destination.

- In the Define endpoint field, enter the endpoint to which you want to send the logs. The endpoint must start with https://. For example, if you want to send logs to Sumo Logic, follow their Configure HTTP Source for Logs and Metrics documentation to get the HTTP Source Address URL to send data to their collector. Enter the HTTP Source Address URL in the Define endpoint field.

- In the Configure Authentication section, select one of the following authentication types and provide the relevant details:

  - Basic Authentication: Provide the username and password for the account to which you want to send logs.

  - Request Header: Provide the header name and value. For example, if you use the Authorization header and the username for the account to which you want to send logs is myaccount and the password is mypassword:

    - Enter Authorization for the Header Name.

    - The header value is in the format of Basic username:password, where username:password is encoded in base64. For this example, the header value is Basic bXlhY2NvdW50Om15cGFzc3dvcmQ=.
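The Request Header value from the example above can be reproduced with a few lines of Python. The helper name `basic_auth_header_value` is hypothetical; the username and password are the placeholder credentials from the example.

```python
import base64


def basic_auth_header_value(username: str, password: str) -> str:
    """Build the value for an Authorization header using Basic credentials."""
    credentials = f"{username}:{password}".encode("utf-8")
    return "Basic " + base64.b64encode(credentials).decode("ascii")


print(basic_auth_header_value("myaccount", "mypassword"))
# prints "Basic bXlhY2NvdW50Om15cGFzc3dvcmQ="
```

Only the username:password pair is base64-encoded; the Basic prefix and the space after it are left as plain text.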

For a Splunk destination:

- Enter a name for the destination.

- In the Configure Destination section, enter the endpoint to which you want to send the logs. The endpoint must start with https://. For example, enter https://<your_account>.splunkcloud.com:8088.

Note: /services/collector/event is automatically appended to the endpoint.

- In the Configure Authentication section, enter the Splunk HEC token. See Set up and use HTTP Event Collector for more information about the Splunk HEC token.

Note: The indexer acknowledgment needs to be disabled.
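To make the endpoint rules for Splunk concrete, the sketch below checks the https:// requirement and shows the URL that results once /services/collector/event is appended. The function name `splunk_hec_url` and the account name `example` are hypothetical, and stripping a trailing slash before appending is an assumption rather than documented behavior.

```python
HEC_EVENT_PATH = "/services/collector/event"  # from the note above


def splunk_hec_url(endpoint: str) -> str:
    """Return the full HEC URL for a configured Splunk endpoint."""
    if not endpoint.startswith("https://"):
        raise ValueError("The endpoint must start with https://")
    # Assumption: a trailing slash is dropped so the path is not doubled.
    return endpoint.rstrip("/") + HEC_EVENT_PATH


print(splunk_hec_url("https://example.splunkcloud.com:8088"))
# prints "https://example.splunkcloud.com:8088/services/collector/event"
```

Because the path is appended automatically, only the base endpoint with its port belongs in the Configure Destination section.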

For an Elasticsearch destination:

- Enter a name for the destination.

- In the Configure Destination section, enter the following details:

a. The endpoint to which you want to send the logs. The endpoint must start with https://. An example endpoint for Elasticsearch: https://<your_account>.us-central1.gcp.cloud.es.io.

b. The name of the destination index where you want to send the logs.

c. Optionally, select the index rotation for how often you want to create a new index: No Rotation, Every Hour, Every Day, Every Week, or Every Month. The default is No Rotation.

- In the Configure Authentication section, enter the username and password for your Elasticsearch account.

- In the Select Tags to Forward section:

a. Select whether you want All tags, No tags, or Specific Tags to be included.

b. Select whether you want to Include or Exclude specific tags, and specify which tags to include or exclude.

- Click Save.

On the Log Forwarding page, hover over the status for a destination to see the percentage of logs that matched the filter criteria and have been forwarded in the past hour.

Edit a destination

- Navigate to Log Forwarding.

- Select Custom Destinations to view a list of all existing destinations.

- Click the Edit button for the destination you want to edit.

- Make the changes on the configuration page.

- Click Save.

Delete a destination

- Navigate to Log Forwarding.

- Select Custom Destinations to view a list of all existing destinations.

- Click the Delete button for the destination that you want to delete, and click Confirm. This removes the destination from the configured list of destinations and logs are no longer forwarded to it.
