- Essentials

- Getting Started

- Datadog

- Datadog Site

- DevSecOps

- Serverless for AWS Lambda

- Agent

- Integrations

- Containers

- Dashboards

- Monitors

- Logs

- APM Tracing

- Profiler

- Tags

- API

- Service Catalog

- Session Replay

- Continuous Testing

- Synthetic Monitoring

- Incident Management

- Database Monitoring

- Cloud Security Management

- Cloud SIEM

- Application Security Management

- Workflow Automation

- CI Visibility

- Test Visibility

- Test Impact Analysis

- Code Analysis

- Learning Center

- Support

- Glossary

- Standard Attributes

- Guides

- Agent

- Integrations

- OpenTelemetry

- Developers

- Authorization

- DogStatsD

- Custom Checks

- Integrations

- Create an Agent-based Integration

- Create an API Integration

- Create a Log Pipeline

- Integration Assets Reference

- Build a Marketplace Offering

- Create a Tile

- Create an Integration Dashboard

- Create a Recommended Monitor

- Create a Cloud SIEM Detection Rule

- OAuth for Integrations

- Install Agent Integration Developer Tool

- Service Checks

- IDE Plugins

- Community

- Guides

- Administrator's Guide

- API

- Datadog Mobile App

- CoScreen

- Cloudcraft

- In The App

- Dashboards

- Notebooks

- DDSQL Editor

- Sheets

- Monitors and Alerting

- Infrastructure

- Metrics

- Watchdog

- Bits AI

- Service Catalog

- API Catalog

- Error Tracking

- Service Management

- Infrastructure

- Application Performance

- APM

- Continuous Profiler

- Database Monitoring

- Data Streams Monitoring

- Data Jobs Monitoring

- Digital Experience

- Real User Monitoring

- Product Analytics

- Synthetic Testing and Monitoring

- Continuous Testing

- Software Delivery

- CI Visibility

- CD Visibility

- Test Optimization

- Code Analysis

- Quality Gates

- DORA Metrics

- Security

- Security Overview

- Cloud SIEM

- Cloud Security Management

- Application Security Management

- AI Observability

- Log Management

- Observability Pipelines

- Log Management

- Administration

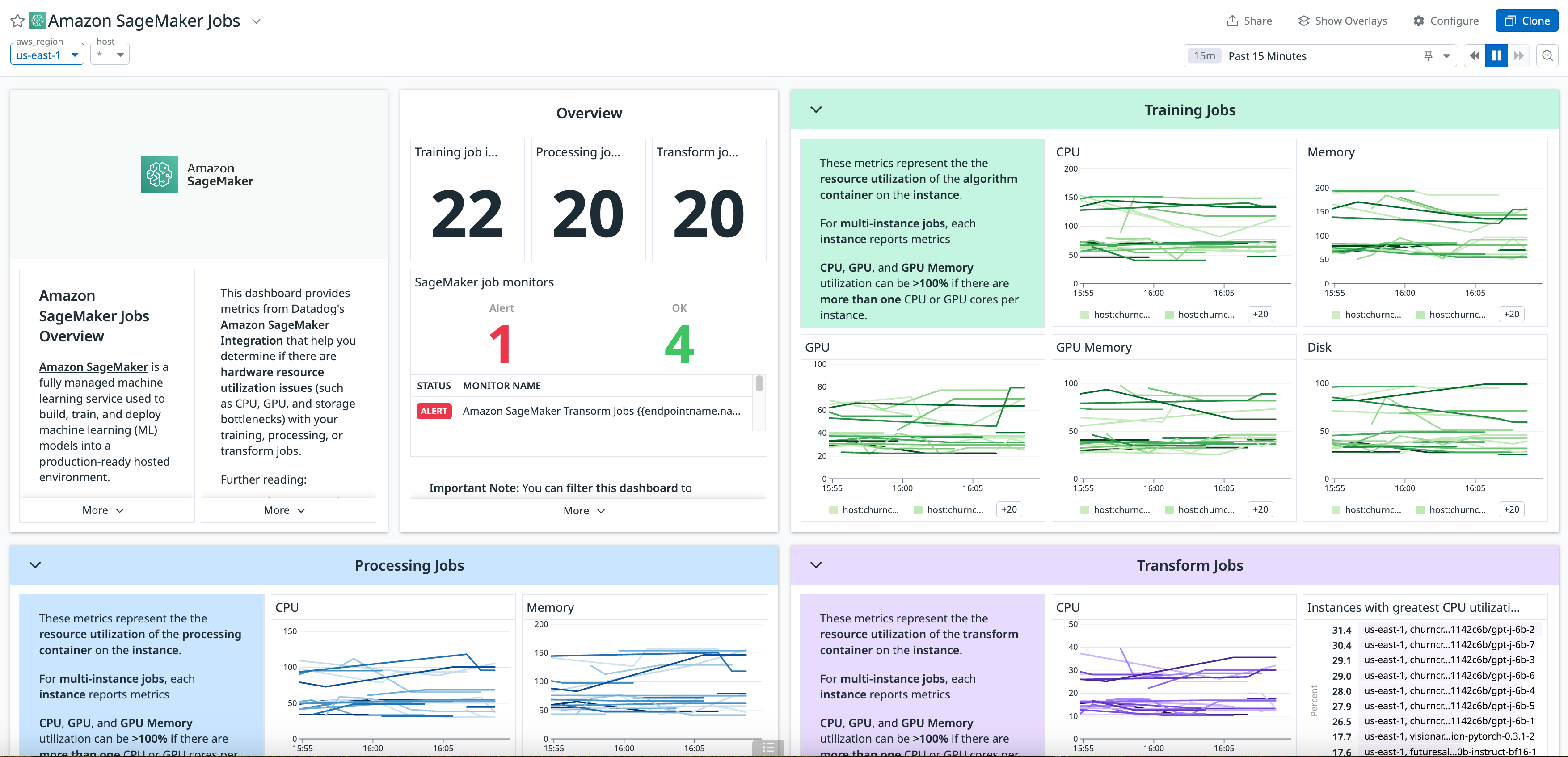

Amazon SageMaker

Overview

Amazon SageMaker is a fully managed machine learning service. With Amazon SageMaker, data scientists and developers can build and train machine learning models, and then directly deploy them into a production-ready hosted environment.

Enable this integration to see all your SageMaker metrics in Datadog.

Setup

Installation

If you haven’t already, set up the Amazon Web Services integration first.

Metric collection

- In the AWS integration page, ensure that SageMaker is enabled under the Metric Collection tab.

- Install the Datadog - Amazon SageMaker integration.
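Once metrics are flowing, they can be referenced by the names listed under Data Collected. The following is a minimal sketch of how a metric query string for one of these metrics might be assembled. The helper `build_query` and the `endpointname` tag key are hypothetical, used only for illustration; check the tags that are actually attached to your metrics before relying on them.

```python
def build_query(metric, aggregator="avg", tags=None):
    """Build a metric query string of the form aggregator:metric{scope}.

    `tags` is an optional dict of tag key/value pairs; with no tags the
    scope is "*" (all sources). Hypothetical helper, not a Datadog API.
    """
    pairs = sorted((tags or {}).items())
    scope = ",".join(f"{key}:{value}" for key, value in pairs) or "*"
    return f"{aggregator}:{metric}{{{scope}}}"


# Example: average endpoint CPU utilization for one endpoint.
# "endpointname" is an assumed tag key, "my-endpoint" a placeholder value.
query = build_query(
    "aws.sagemaker.endpoints.cpuutilization",
    tags={"endpointname": "my-endpoint"},
)
print(query)
```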

Log collection

Enable logging

Configure Amazon SageMaker to send logs either to an S3 bucket or to CloudWatch.

Note: If you log to an S3 bucket, make sure that amazon_sagemaker is set as Target prefix.

Send logs to Datadog

If you haven’t already, set up the Datadog log collection AWS Lambda function.

Once the Lambda function is installed, manually add a trigger in the AWS console on the S3 bucket or CloudWatch log group that contains your Amazon SageMaker logs.
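For the S3 case, the trigger amounts to an event notification on the bucket that invokes the log collection function whenever a new log object is created. The sketch below only builds the notification payload in the shape the S3 notification configuration uses; it does not call AWS. The function name `s3_trigger_config` and the ARN are placeholders, and it assumes the SageMaker logs are written under the `amazon_sagemaker` prefix mentioned above.

```python
import json


def s3_trigger_config(forwarder_arn, prefix="amazon_sagemaker"):
    """Return an S3 bucket notification configuration (as a dict) that
    invokes a Lambda function for every object created under `prefix`.

    Hypothetical helper: it only assembles the payload, nothing is sent.
    """
    return {
        "LambdaFunctionConfigurations": [
            {
                "LambdaFunctionArn": forwarder_arn,
                "Events": ["s3:ObjectCreated:*"],
                "Filter": {
                    "Key": {
                        "FilterRules": [{"Name": "prefix", "Value": prefix}]
                    }
                },
            }
        ]
    }


# Placeholder ARN for illustration; substitute your own function's ARN.
config = s3_trigger_config(
    "arn:aws:lambda:us-east-1:123456789012:function:example-log-forwarder"
)
print(json.dumps(config, indent=2))
```

A payload like this is typically applied to the bucket together with a permission that allows S3 to invoke the function; adding the trigger through the console handles both steps.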

Data Collected

Metrics

| aws.sagemaker.consumed_read_requests_units (count) | The average number of consumed read units over the specified time period. |

| aws.sagemaker.consumed_read_requests_units.maximum (count) | The maximum number of consumed read units over the specified time period. |

| aws.sagemaker.consumed_read_requests_units.minimum (count) | The minimum number of consumed read units over the specified time period. |

| aws.sagemaker.consumed_read_requests_units.p90 (count) | The 90th percentile of consumed read units over the specified time period. |

| aws.sagemaker.consumed_read_requests_units.p95 (count) | The 95th percentile of consumed read units over the specified time period. |

| aws.sagemaker.consumed_read_requests_units.p99 (count) | The 99th percentile of consumed read units over the specified time period. |

| aws.sagemaker.consumed_read_requests_units.sample_count (count) | The sample count of consumed read units over the specified time period. |

| aws.sagemaker.consumed_read_requests_units.sum (count) | The sum of consumed read units over the specified time period. |

| aws.sagemaker.consumed_write_requests_units (count) | The average number of consumed write units over the specified time period. |

| aws.sagemaker.consumed_write_requests_units.maximum (count) | The maximum number of consumed write units over the specified time period. |

| aws.sagemaker.consumed_write_requests_units.minimum (count) | The minimum number of consumed write units over the specified time period. |

| aws.sagemaker.consumed_write_requests_units.p90 (count) | The 90th percentile of consumed write units over the specified time period. |

| aws.sagemaker.consumed_write_requests_units.p95 (count) | The 95th percentile of consumed write units over the specified time period. |

| aws.sagemaker.consumed_write_requests_units.p99 (count) | The 99th percentile of consumed write units over the specified time period. |

| aws.sagemaker.consumed_write_requests_units.sample_count (count) | The sample count of consumed write units over the specified time period. |

| aws.sagemaker.consumed_write_requests_units.sum (count) | The sum of consumed write units over the specified time period. |

| aws.sagemaker.endpoints.cpuutilization (gauge) | The average percentage of CPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.endpoints.cpuutilization.maximum (gauge) | The maximum percentage of CPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.endpoints.cpuutilization.minimum (gauge) | The minimum percentage of CPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.endpoints.disk_utilization (gauge) | The average percentage of disk space used by the containers on an instance. Shown as percent |

| aws.sagemaker.endpoints.disk_utilization.maximum (gauge) | The maximum percentage of disk space used by the containers on an instance. Shown as percent |

| aws.sagemaker.endpoints.disk_utilization.minimum (gauge) | The minimum percentage of disk space used by the containers on an instance. Shown as percent |

| aws.sagemaker.endpoints.gpu_memory_utilization (gauge) | The average percentage of GPU memory used by the containers on an instance. Shown as percent |

| aws.sagemaker.endpoints.gpu_memory_utilization.maximum (gauge) | The maximum percentage of GPU memory used by the containers on an instance. Shown as percent |

| aws.sagemaker.endpoints.gpu_memory_utilization.minimum (gauge) | The minimum percentage of GPU memory used by the containers on an instance. Shown as percent |

| aws.sagemaker.endpoints.gpu_utilization (gauge) | The average percentage of GPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.endpoints.gpu_utilization.maximum (gauge) | The maximum percentage of GPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.endpoints.gpu_utilization.minimum (gauge) | The minimum percentage of GPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.endpoints.loaded_model_count (count) | The number of models loaded in the containers of the multi-model endpoint. This metric is emitted per instance. |

| aws.sagemaker.endpoints.loaded_model_count.maximum (count) | The maximum number of models loaded in the containers of the multi-model endpoint. This metric is emitted per instance. |

| aws.sagemaker.endpoints.loaded_model_count.minimum (count) | The minimum number of models loaded in the containers of the multi-model endpoint. This metric is emitted per instance. |

| aws.sagemaker.endpoints.loaded_model_count.sample_count (count) | The sample count of models loaded in the containers of the multi-model endpoint. This metric is emitted per instance. |

| aws.sagemaker.endpoints.loaded_model_count.sum (count) | The sum of models loaded in the containers of the multi-model endpoint. This metric is emitted per instance. |

| aws.sagemaker.endpoints.memory_utilization (gauge) | The average percentage of memory that is used by the containers on an instance. Shown as percent |

| aws.sagemaker.endpoints.memory_utilization.maximum (gauge) | The maximum percentage of memory that is used by the containers on an instance. Shown as percent |

| aws.sagemaker.endpoints.memory_utilization.minimum (gauge) | The minimum percentage of memory that is used by the containers on an instance. Shown as percent |

| aws.sagemaker.invocation_4xx_errors (count) | The average number of InvokeEndpoint requests where the model returned a 4xx HTTP response code. Shown as request |

| aws.sagemaker.invocation_4xx_errors.sum (count) | The sum of the number of InvokeEndpoint requests where the model returned a 4xx HTTP response code. Shown as request |

| aws.sagemaker.invocation_5xx_errors (count) | The average number of InvokeEndpoint requests where the model returned a 5xx HTTP response code. Shown as request |

| aws.sagemaker.invocation_5xx_errors.sum (count) | The sum of the number of InvokeEndpoint requests where the model returned a 5xx HTTP response code. Shown as request |

| aws.sagemaker.invocation_model_errors (count) | The number of model invocation requests that did not result in a 2XX HTTP response. This includes 4XX/5XX status codes, low-level socket errors, malformed HTTP responses, and request timeouts. |

| aws.sagemaker.invocations (count) | The number of InvokeEndpoint requests sent to a model endpoint. Shown as request |

| aws.sagemaker.invocations.maximum (count) | The maximum of the number of InvokeEndpoint requests sent to a model endpoint. Shown as request |

| aws.sagemaker.invocations.minimum (count) | The minimum of the number of InvokeEndpoint requests sent to a model endpoint. Shown as request |

| aws.sagemaker.invocations.sample_count (count) | The sample count of the number of InvokeEndpoint requests sent to a model endpoint. Shown as request |

| aws.sagemaker.invocations_per_instance (count) | The number of invocations sent to a model normalized by InstanceCount in each ProductionVariant. |

| aws.sagemaker.invocations_per_instance.sum (count) | The sum of invocations sent to a model normalized by InstanceCount in each ProductionVariant. |

| aws.sagemaker.jobs_failed (count) | The average number of occurrences a single labeling job failed. Shown as job |

| aws.sagemaker.jobs_failed.sample_count (count) | The sample count of occurrences a single labeling job failed. Shown as job |

| aws.sagemaker.jobs_failed.sum (count) | The sum of occurrences a single labeling job failed. Shown as job |

| aws.sagemaker.jobs_stopped (count) | The average number of occurrences a single labeling job was stopped. Shown as job |

| aws.sagemaker.jobs_stopped.sample_count (count) | The sample count of occurrences a single labeling job was stopped. Shown as job |

| aws.sagemaker.jobs_stopped.sum (count) | The sum of occurrences a single labeling job was stopped. Shown as job |

| aws.sagemaker.labelingjobs.dataset_objects_auto_annotated (count) | The average number of dataset objects auto-annotated in a labeling job. |

| aws.sagemaker.labelingjobs.dataset_objects_auto_annotated.max (count) | The maximum number of dataset objects auto-annotated in a labeling job. |

| aws.sagemaker.labelingjobs.dataset_objects_human_annotated (count) | The average number of dataset objects annotated by a human in a labeling job. |

| aws.sagemaker.labelingjobs.dataset_objects_human_annotated.max (count) | The maximum number of dataset objects annotated by a human in a labeling job. |

| aws.sagemaker.labelingjobs.dataset_objects_labeling_failed (count) | The number of dataset objects that failed labeling in a labeling job. |

| aws.sagemaker.labelingjobs.dataset_objects_labeling_failed.max (count) | The maximum number of dataset objects that failed labeling in a labeling job. |

| aws.sagemaker.labelingjobs.jobs_succeeded (count) | The average number of occurrences a single labeling job succeeded. Shown as job |

| aws.sagemaker.labelingjobs.jobs_succeeded.sample_count (count) | The sample count of occurrences a single labeling job succeeded. Shown as job |

| aws.sagemaker.labelingjobs.jobs_succeeded.sum (count) | The sum of occurrences a single labeling job succeeded. Shown as job |

| aws.sagemaker.labelingjobs.total_dataset_objects_labeled (count) | The average number of dataset objects labeled successfully in a labeling job. |

| aws.sagemaker.labelingjobs.total_dataset_objects_labeled.maximum (count) | The maximum number of dataset objects labeled successfully in a labeling job. |

| aws.sagemaker.model_cache_hit (count) | The number of InvokeEndpoint requests sent to the multi-model endpoint for which the model was already loaded. Shown as request |

| aws.sagemaker.model_cache_hit.maximum (count) | The maximum number of InvokeEndpoint requests sent to the multi-model endpoint for which the model was already loaded. Shown as request |

| aws.sagemaker.model_cache_hit.minimum (count) | The minimum number of InvokeEndpoint requests sent to the multi-model endpoint for which the model was already loaded. Shown as request |

| aws.sagemaker.model_cache_hit.sample_count (count) | The sample count of InvokeEndpoint requests sent to the multi-model endpoint for which the model was already loaded. Shown as request |

| aws.sagemaker.model_cache_hit.sum (count) | The sum of InvokeEndpoint requests sent to the multi-model endpoint for which the model was already loaded. Shown as request |

| aws.sagemaker.model_downloading_time (gauge) | The interval of time that it takes to download the model from Amazon Simple Storage Service (Amazon S3). Shown as microsecond |

| aws.sagemaker.model_downloading_time.maximum (gauge) | The maximum interval of time that it takes to download the model from Amazon Simple Storage Service (Amazon S3). Shown as microsecond |

| aws.sagemaker.model_downloading_time.minimum (gauge) | The minimum interval of time that it takes to download the model from Amazon Simple Storage Service (Amazon S3). Shown as microsecond |

| aws.sagemaker.model_downloading_time.sample_count (count) | The sample count of the interval of time that it takes to download the model from Amazon Simple Storage Service (Amazon S3). Shown as microsecond |

| aws.sagemaker.model_downloading_time.sum (gauge) | The sum of the interval of time that it takes to download the model from Amazon Simple Storage Service (Amazon S3). Shown as microsecond |

| aws.sagemaker.model_latency (gauge) | The average interval of time taken by a model to respond as viewed from Amazon SageMaker. Shown as microsecond |

| aws.sagemaker.model_latency.maximum (gauge) | The maximum interval of time taken by a model to respond as viewed from Amazon SageMaker. Shown as microsecond |

| aws.sagemaker.model_latency.minimum (gauge) | The minimum interval of time taken by a model to respond as viewed from Amazon SageMaker. Shown as microsecond |

| aws.sagemaker.model_latency.sample_count (count) | The sample count of the interval of time taken by a model to respond as viewed from Amazon SageMaker. Shown as microsecond |

| aws.sagemaker.model_latency.sum (gauge) | The sum of the interval of time taken by a model to respond as viewed from Amazon SageMaker. Shown as microsecond |

| aws.sagemaker.model_loading_time (gauge) | The interval of time that it takes to load the model through the container's LoadModel API call. Shown as microsecond |

| aws.sagemaker.model_loading_time.maximum (gauge) | The maximum interval of time that it takes to load the model through the container's LoadModel API call. Shown as microsecond |

| aws.sagemaker.model_loading_time.minimum (gauge) | The minimum interval of time that it takes to load the model through the container's LoadModel API call. Shown as microsecond |

| aws.sagemaker.model_loading_time.sample_count (count) | The sample count of the interval of time that it takes to load the model through the container's LoadModel API call. Shown as microsecond |

| aws.sagemaker.model_loading_time.sum (gauge) | The sum of the interval of time that it takes to load the model through the container's LoadModel API call. Shown as microsecond |

| aws.sagemaker.model_loading_wait_time (gauge) | The interval of time that an invocation request has waited for the target model to be downloaded, or loaded, or both in order to perform inference. Shown as microsecond |

| aws.sagemaker.model_loading_wait_time.maximum (gauge) | The maximum interval of time that an invocation request has waited for the target model to be downloaded, or loaded, or both in order to perform inference. Shown as microsecond |

| aws.sagemaker.model_loading_wait_time.minimum (gauge) | The minimum interval of time that an invocation request has waited for the target model to be downloaded, or loaded, or both in order to perform inference. Shown as microsecond |

| aws.sagemaker.model_loading_wait_time.sample_count (count) | The sample count of the interval of time that an invocation request has waited for the target model to be downloaded, or loaded, or both in order to perform inference. Shown as microsecond |

| aws.sagemaker.model_loading_wait_time.sum (gauge) | The sum of the interval of time that an invocation request has waited for the target model to be downloaded, or loaded, or both in order to perform inference. Shown as microsecond |

| aws.sagemaker.model_setup_time (gauge) | The average time it takes to launch new compute resources for a serverless endpoint. Shown as microsecond |

| aws.sagemaker.model_setup_time.maximum (gauge) | The maximum interval of time it takes to launch new compute resources for a serverless endpoint. Shown as microsecond |

| aws.sagemaker.model_setup_time.minimum (gauge) | The minimum interval of time it takes to launch new compute resources for a serverless endpoint. Shown as microsecond |

| aws.sagemaker.model_setup_time.sample_count (count) | The sample count of the amount of time it takes to launch new compute resources for a serverless endpoint. Shown as microsecond |

| aws.sagemaker.model_setup_time.sum (gauge) | The total amount of time it takes to launch new compute resources for a serverless endpoint. Shown as microsecond |

| aws.sagemaker.model_unloading_time (gauge) | The interval of time that it takes to unload the model through the container's UnloadModel API call. Shown as microsecond |

| aws.sagemaker.model_unloading_time.maximum (gauge) | The maximum interval of time that it takes to unload the model through the container's UnloadModel API call. Shown as microsecond |

| aws.sagemaker.model_unloading_time.minimum (gauge) | The minimum interval of time that it takes to unload the model through the container's UnloadModel API call. Shown as microsecond |

| aws.sagemaker.model_unloading_time.sample_count (count) | The sample count of the interval of time that it takes to unload the model through the container's UnloadModel API call. Shown as microsecond |

| aws.sagemaker.model_unloading_time.sum (gauge) | The sum of the interval of time that it takes to unload the model through the container's UnloadModel API call. Shown as microsecond |

| aws.sagemaker.modelbuildingpipeline.execution_duration (gauge) | The average duration in milliseconds that the pipeline execution ran. Shown as millisecond |

| aws.sagemaker.modelbuildingpipeline.execution_duration.maximum (gauge) | The maximum duration in milliseconds that the pipeline execution ran. Shown as millisecond |

| aws.sagemaker.modelbuildingpipeline.execution_duration.minimum (gauge) | The minimum duration in milliseconds that the pipeline execution ran. Shown as millisecond |

| aws.sagemaker.modelbuildingpipeline.execution_duration.sample_count (count) | The sample count of the duration in milliseconds that the pipeline execution ran. Shown as millisecond |

| aws.sagemaker.modelbuildingpipeline.execution_duration.sum (gauge) | The sum of the duration in milliseconds that the pipeline execution ran. Shown as millisecond |

| aws.sagemaker.modelbuildingpipeline.execution_failed (count) | The average number of pipeline executions that failed. |

| aws.sagemaker.modelbuildingpipeline.execution_failed.sum (count) | The sum of pipeline executions that failed. |

| aws.sagemaker.modelbuildingpipeline.execution_started (count) | The average number of pipeline executions that started. |

| aws.sagemaker.modelbuildingpipeline.execution_started.sum (count) | The sum of pipeline executions that started. |

| aws.sagemaker.modelbuildingpipeline.execution_stopped (count) | The average number of pipeline executions that stopped. |

| aws.sagemaker.modelbuildingpipeline.execution_stopped.sum (count) | The sum of pipeline executions that stopped. |

| aws.sagemaker.modelbuildingpipeline.execution_succeeded (count) | The average number of pipeline executions that succeeded. |

| aws.sagemaker.modelbuildingpipeline.execution_succeeded.sum (count) | The sum of pipeline executions that succeeded. |

| aws.sagemaker.modelbuildingpipeline.step_duration (gauge) | The average duration in milliseconds that the step ran. Shown as millisecond |

| aws.sagemaker.modelbuildingpipeline.step_duration.maximum (gauge) | The maximum duration in milliseconds that the step ran. Shown as millisecond |

| aws.sagemaker.modelbuildingpipeline.step_duration.minimum (gauge) | The minimum duration in milliseconds that the step ran. Shown as millisecond |

| aws.sagemaker.modelbuildingpipeline.step_duration.sample_count (count) | The sample count of the duration in milliseconds that the step ran. Shown as millisecond |

| aws.sagemaker.modelbuildingpipeline.step_duration.sum (gauge) | The sum of the duration in milliseconds that the step ran. Shown as millisecond |

| aws.sagemaker.modelbuildingpipeline.step_failed (count) | The average number of steps that failed. |

| aws.sagemaker.modelbuildingpipeline.step_failed.sum (count) | The sum of steps that failed. |

| aws.sagemaker.modelbuildingpipeline.step_started (count) | The average number of steps that started. |

| aws.sagemaker.modelbuildingpipeline.step_started.sum (count) | The sum of steps that started. |

| aws.sagemaker.modelbuildingpipeline.step_stopped (count) | The average number of steps that stopped. |

| aws.sagemaker.modelbuildingpipeline.step_stopped.sum (count) | The sum of steps that stopped. |

| aws.sagemaker.modelbuildingpipeline.step_succeeded (count) | The average number of steps that succeeded. |

| aws.sagemaker.modelbuildingpipeline.step_succeeded.sum (count) | The sum of steps that succeeded. |

| aws.sagemaker.overhead_latency (gauge) | The average interval of time added to the time taken to respond to a client request by Amazon SageMaker overheads. Shown as microsecond |

| aws.sagemaker.overhead_latency.maximum (gauge) | The maximum interval of time added to the time taken to respond to a client request by Amazon SageMaker overheads. Shown as microsecond |

| aws.sagemaker.overhead_latency.minimum (gauge) | The minimum interval of time added to the time taken to respond to a client request by Amazon SageMaker overheads. Shown as microsecond |

| aws.sagemaker.overhead_latency.sample_count (count) | The sample count of the interval of time added to the time taken to respond to a client request by Amazon SageMaker overheads. Shown as microsecond |

| aws.sagemaker.overhead_latency.sum (gauge) | The sum of the interval of time added to the time taken to respond to a client request by Amazon SageMaker overheads. Shown as microsecond |

| aws.sagemaker.processingjobs.cpuutilization (gauge) | The average percentage of CPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.processingjobs.cpuutilization.maximum (gauge) | The maximum percentage of CPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.processingjobs.cpuutilization.minimum (gauge) | The minimum percentage of CPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.processingjobs.disk_utilization (gauge) | The average percentage of disk space used by the containers on an instance. Shown as percent |

| aws.sagemaker.processingjobs.disk_utilization.maximum (gauge) | The maximum percentage of disk space used by the containers on an instance. Shown as percent |

| aws.sagemaker.processingjobs.disk_utilization.minimum (gauge) | The minimum percentage of disk space used by the containers on an instance. Shown as percent |

| aws.sagemaker.processingjobs.gpu_memory_utilization (gauge) | The average percentage of GPU memory used by the containers on an instance. Shown as percent |

| aws.sagemaker.processingjobs.gpu_memory_utilization.maximum (gauge) | The maximum percentage of GPU memory used by the containers on an instance. Shown as percent |

| aws.sagemaker.processingjobs.gpu_memory_utilization.minimum (gauge) | The minimum percentage of GPU memory used by the containers on an instance. Shown as percent |

| aws.sagemaker.processingjobs.gpu_utilization (gauge) | The average percentage of GPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.processingjobs.gpu_utilization.maximum (gauge) | The maximum percentage of GPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.processingjobs.gpu_utilization.minimum (gauge) | The minimum percentage of GPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.processingjobs.memory_utilization (gauge) | The average percentage of memory that is used by the containers on an instance. Shown as percent |

| aws.sagemaker.processingjobs.memory_utilization.maximum (gauge) | The maximum percentage of memory that is used by the containers on an instance. Shown as percent |

| aws.sagemaker.processingjobs.memory_utilization.minimum (gauge) | The minimum percentage of memory that is used by the containers on an instance. Shown as percent |

| aws.sagemaker.tasks_returned (count) | The average number of occurrences a single task was returned. |

| aws.sagemaker.tasks_returned.sample_count (count) | The sample count of occurrences a single task was returned. |

| aws.sagemaker.tasks_returned.sum (count) | The sum of occurrences a single task was returned. |

| aws.sagemaker.trainingjobs.cpuutilization (gauge) | The average percentage of CPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.trainingjobs.cpuutilization.maximum (gauge) | The maximum percentage of CPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.trainingjobs.cpuutilization.minimum (gauge) | The minimum percentage of CPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.trainingjobs.disk_utilization (gauge) | The average percentage of disk space used by the containers on an instance. Shown as percent |

| aws.sagemaker.trainingjobs.disk_utilization.maximum (gauge) | The maximum percentage of disk space used by the containers on an instance. Shown as percent |

| aws.sagemaker.trainingjobs.disk_utilization.minimum (gauge) | The minimum percentage of disk space used by the containers on an instance. Shown as percent |

| aws.sagemaker.trainingjobs.gpu_memory_utilization (gauge) | The average percentage of GPU memory used by the containers on an instance. Shown as percent |

| aws.sagemaker.trainingjobs.gpu_memory_utilization.maximum (gauge) | The maximum percentage of GPU memory used by the containers on an instance. Shown as percent |

| aws.sagemaker.trainingjobs.gpu_memory_utilization.minimum (gauge) | The minimum percentage of GPU memory used by the containers on an instance. Shown as percent |

| aws.sagemaker.trainingjobs.gpu_utilization (gauge) | The average percentage of GPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.trainingjobs.gpu_utilization.maximum (gauge) | The maximum percentage of GPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.trainingjobs.gpu_utilization.minimum (gauge) | The minimum percentage of GPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.trainingjobs.memory_utilization (gauge) | The average percentage of memory that is used by the containers on an instance. Shown as percent |

| aws.sagemaker.trainingjobs.memory_utilization.maximum (gauge) | The maximum percentage of memory that is used by the containers on an instance. Shown as percent |

| aws.sagemaker.trainingjobs.memory_utilization.minimum (gauge) | The minimum percentage of memory that is used by the containers on an instance. Shown as percent |

| aws.sagemaker.transformjobs.cpuutilization (gauge) | The average percentage of CPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.transformjobs.cpuutilization.maximum (gauge) | The maximum percentage of CPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.transformjobs.cpuutilization.minimum (gauge) | The minimum percentage of CPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.transformjobs.disk_utilization (gauge) | The average percentage of disk space used by the containers on an instance. Shown as percent |

| aws.sagemaker.transformjobs.disk_utilization.maximum (gauge) | The maximum percentage of disk space used by the containers on an instance. Shown as percent |

| aws.sagemaker.transformjobs.disk_utilization.minimum (gauge) | The minimum percentage of disk space used by the containers on an instance. Shown as percent |

| aws.sagemaker.transformjobs.gpu_memory_utilization (gauge) | The average percentage of GPU memory used by the containers on an instance. Shown as percent |

| aws.sagemaker.transformjobs.gpu_memory_utilization.maximum (gauge) | The maximum percentage of GPU memory used by the containers on an instance. Shown as percent |

| aws.sagemaker.transformjobs.gpu_memory_utilization.minimum (gauge) | The minimum percentage of GPU memory used by the containers on an instance. Shown as percent |

| aws.sagemaker.transformjobs.gpu_utilization (gauge) | The average percentage of GPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.transformjobs.gpu_utilization.maximum (gauge) | The maximum percentage of GPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.transformjobs.gpu_utilization.minimum (gauge) | The minimum percentage of GPU units that are used by the containers on an instance. Shown as percent |

| aws.sagemaker.transformjobs.memory_utilization (gauge) | The average percentage of memory that is used by the containers on an instance. Shown as percent |

| aws.sagemaker.transformjobs.memory_utilization.maximum (gauge) | The maximum percentage of memory that is used by the containers on an instance. Shown as percent |

| aws.sagemaker.transformjobs.memory_utilization.minimum (gauge) | The minimum percentage of memory that is used by the containers on an instance. Shown as percent |

| aws.sagemaker.workteam.active_workers (count) | The average number of single active workers on a private work team that submitted, released, or declined a task. |

| aws.sagemaker.workteam.active_workers.sample_count (count) | The sample count of single active workers on a private work team that submitted, released, or declined a task. |

| aws.sagemaker.workteam.active_workers.sum (count) | The sum of single active workers on a private work team that submitted, released, or declined a task. |

| aws.sagemaker.workteam.tasks_accepted (count) | The average number of occurrences a single task was accepted by a worker. |

| aws.sagemaker.workteam.tasks_accepted.sample_count (count) | The sample count of occurrences a single task was accepted by a worker. |

| aws.sagemaker.workteam.tasks_accepted.sum (count) | The sum of occurrences a single task was accepted by a worker. |

| aws.sagemaker.workteam.tasks_declined (count) | The average number of occurrences a single task was declined by a worker. |

| aws.sagemaker.workteam.tasks_declined.sample_count (count) | The sample count of occurrences a single task was declined by a worker. |

| aws.sagemaker.workteam.tasks_declined.sum (count) | The sum of occurrences a single task was declined by a worker. |

| aws.sagemaker.workteam.tasks_submitted (count) | The average number of occurrences a single task was submitted/completed by a private worker. |

| aws.sagemaker.workteam.tasks_submitted.sample_count (count) | The sample count of occurrences a single task was submitted/completed by a private worker. |

| aws.sagemaker.workteam.tasks_submitted.sum (count) | The sum of occurrences a single task was submitted/completed by a private worker. |

| aws.sagemaker.workteam.time_spent (count) | The average time spent on a task completed by a private worker. |

| aws.sagemaker.workteam.time_spent.sample_count (count) | The sample count of the time spent on a task completed by a private worker. |

| aws.sagemaker.workteam.time_spent.sum (count) | The sum of the time spent on a task completed by a private worker. |
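Several of the metrics above are most useful in combination. As a rough sketch, an endpoint's error rate can be derived from the invocation and 4xx/5xx error sums, and an approximate end-to-end latency from model latency plus overhead latency (both reported in microseconds). The function names and the sample values below are illustrative assumptions, not part of the integration.

```python
def error_rate(invocations, errors_4xx, errors_5xx):
    """Fraction of InvokeEndpoint requests that returned a 4xx or 5xx code.

    Inputs correspond to sums over the same time window, for example
    aws.sagemaker.invocation_4xx_errors.sum. Returns 0.0 with no traffic.
    """
    if invocations <= 0:
        return 0.0
    return (errors_4xx + errors_5xx) / invocations


def total_latency_ms(model_latency_us, overhead_latency_us):
    """Approximate request latency in milliseconds, taken as model latency
    plus SageMaker overhead latency, both given in microseconds."""
    return (model_latency_us + overhead_latency_us) / 1000.0


# Made-up sample values for illustration only.
rate = error_rate(invocations=1000, errors_4xx=15, errors_5xx=5)
latency = total_latency_ms(model_latency_us=120_000, overhead_latency_us=8_000)
print(f"error rate: {rate:.1%}, latency: {latency} ms")
```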

Events

The Amazon SageMaker integration does not include any events.

Service Checks

The Amazon SageMaker integration does not include any service checks.

Out-of-the-box monitoring

Datadog provides out-of-the-box dashboards for your SageMaker endpoints and jobs.

SageMaker endpoints

Use the SageMaker endpoints dashboard to help you immediately start monitoring the health and performance of your SageMaker endpoints with no additional configuration. Determine which endpoints have errors, higher-than-expected latency, or traffic spikes. Review and correct your instance type and scaling policy selections using CPU, GPU, memory, and disk utilization metrics.

SageMaker jobs

You can use the SageMaker jobs dashboard to gain insight into the resource utilization (for example, finding CPU, GPU, and storage bottlenecks) of your training, processing, or transform jobs. Use this information to optimize your compute instances.
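The kind of bottleneck check the dashboard supports visually can also be sketched in code. The example below flags resources whose peak utilization crosses a threshold. The function `find_bottlenecks`, the 90 percent threshold, and the sample values are assumptions for illustration, and the sketch assumes each value is already normalized to a 0 to 100 percent scale.

```python
def find_bottlenecks(utilization, threshold=90.0):
    """Return the sorted names of resources whose utilization percentage
    is at or above `threshold`.

    `utilization` maps a resource name to a peak percentage, for example
    the value of aws.sagemaker.trainingjobs.cpuutilization.maximum.
    """
    return sorted(
        name for name, percent in utilization.items() if percent >= threshold
    )


# Made-up peak utilization values for one training job.
job_peaks = {"cpu": 97.0, "gpu": 41.5, "memory": 92.3, "disk": 12.0}
print(find_bottlenecks(job_peaks))
```

A job that is saturated on CPU and memory but leaves the GPU mostly idle, as in this sample, may be a candidate for a different instance type.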

Troubleshooting

Need help? Contact Datadog support.