LLM Observability Quickstart

LLM Observability is not available on the US1-FED site.

This quickstart uses the LLM Observability SDK for Python. For detailed usage, see the SDK documentation. If your application is written in another language, you can create traces by calling the API instead.

Jupyter notebook quickstart

To run examples from Jupyter notebooks, see the LLM Observability Jupyter Notebooks repository.

Command-line quickstart

Use the steps below to run a simple Python script that generates an LLM Observability trace.

Prerequisites

- LLM Observability requires a Datadog API key (see the instructions for creating an API key).

- The example script below uses OpenAI, but you can modify it to use a different provider. To run the script as written, you need:

  - An OpenAI API key stored in your environment as OPENAI_API_KEY. To create one, see Account Setup and Set up your API key in the OpenAI documentation.

  - The OpenAI Python library installed. See Setting up Python in the OpenAI documentation for instructions.
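As an optional sanity check before continuing, the OpenAI prerequisites above can be verified with a short standard-library script. This is only a sketch: the helper names `missing_env_vars` and `missing_packages` are hypothetical and are not part of ddtrace or the OpenAI library.

```python
import importlib.util
import os


def missing_env_vars(required=("OPENAI_API_KEY",), env=None):
    """Return the names of required environment variables that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name)]


def missing_packages(packages=("openai",)):
    """Return the names of packages that cannot be found by the import system."""
    return [name for name in packages if importlib.util.find_spec(name) is None]


# Report anything that still needs to be set up (hypothetical helper output).
for name in missing_env_vars():
    print(f"Environment variable {name} is not set")
for name in missing_packages():
    print(f"Python package {name} is not installed")
```

If the script prints nothing, the OpenAI key and library are in place.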

1. Install the SDK

Install the ddtrace and openai packages:

    pip install ddtrace
    pip install openai

2. Create the script

The Python script below makes a single OpenAI call. Save it as quickstart.py.

quickstart.py

    import os

    from openai import OpenAI

    # Read the OpenAI API key from the environment.
    oai_client = OpenAI(api_key=os.environ.get("OPENAI_API_KEY"))

    # Make a single chat completion call.
    completion = oai_client.chat.completions.create(
        model="gpt-3.5-turbo",
        messages=[
            {"role": "system", "content": "You are a helpful customer assistant for a furniture store."},
            {"role": "user", "content": "I'd like to buy a chair for my living room."},
        ],
    )

3. Run the script

Run the Python script with the following shell command, sending a trace of the OpenAI call to Datadog:

    DD_LLMOBS_ENABLED=1 DD_LLMOBS_ML_APP=onboarding-quickstart \
    DD_API_KEY=<YOUR_DATADOG_API_KEY> DD_SITE=<YOUR_DATADOG_SITE> \
    DD_LLMOBS_AGENTLESS_ENABLED=1 ddtrace-run python quickstart.py

For details on the required environment variables, see the SDK documentation.
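If you would rather launch the script from Python than from a shell, the same environment variables can be assembled in a dictionary and passed to a subprocess. The sketch below uses only the standard library and the variable names from the command above; the helper names `build_llmobs_env` and `run_quickstart` are hypothetical.

```python
import os
import subprocess


def build_llmobs_env(api_key, site, ml_app="onboarding-quickstart", base_env=None):
    """Return a copy of the environment with the quickstart's Datadog variables set."""
    env = dict(os.environ if base_env is None else base_env)
    env.update(
        {
            "DD_LLMOBS_ENABLED": "1",
            "DD_LLMOBS_ML_APP": ml_app,
            "DD_API_KEY": api_key,
            "DD_SITE": site,
            "DD_LLMOBS_AGENTLESS_ENABLED": "1",
        }
    )
    return env


def run_quickstart(env, script="quickstart.py"):
    """Run the script under ddtrace-run and return the process exit code."""
    result = subprocess.run(["ddtrace-run", "python", script], env=env, check=False)
    return result.returncode


# Example usage (replace the placeholders with your own values):
# env = build_llmobs_env("<YOUR_DATADOG_API_KEY>", "<YOUR_DATADOG_SITE>")
# exit_code = run_quickstart(env)
```

Copying the existing environment first keeps OPENAI_API_KEY and PATH available to the child process.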

4. View the trace

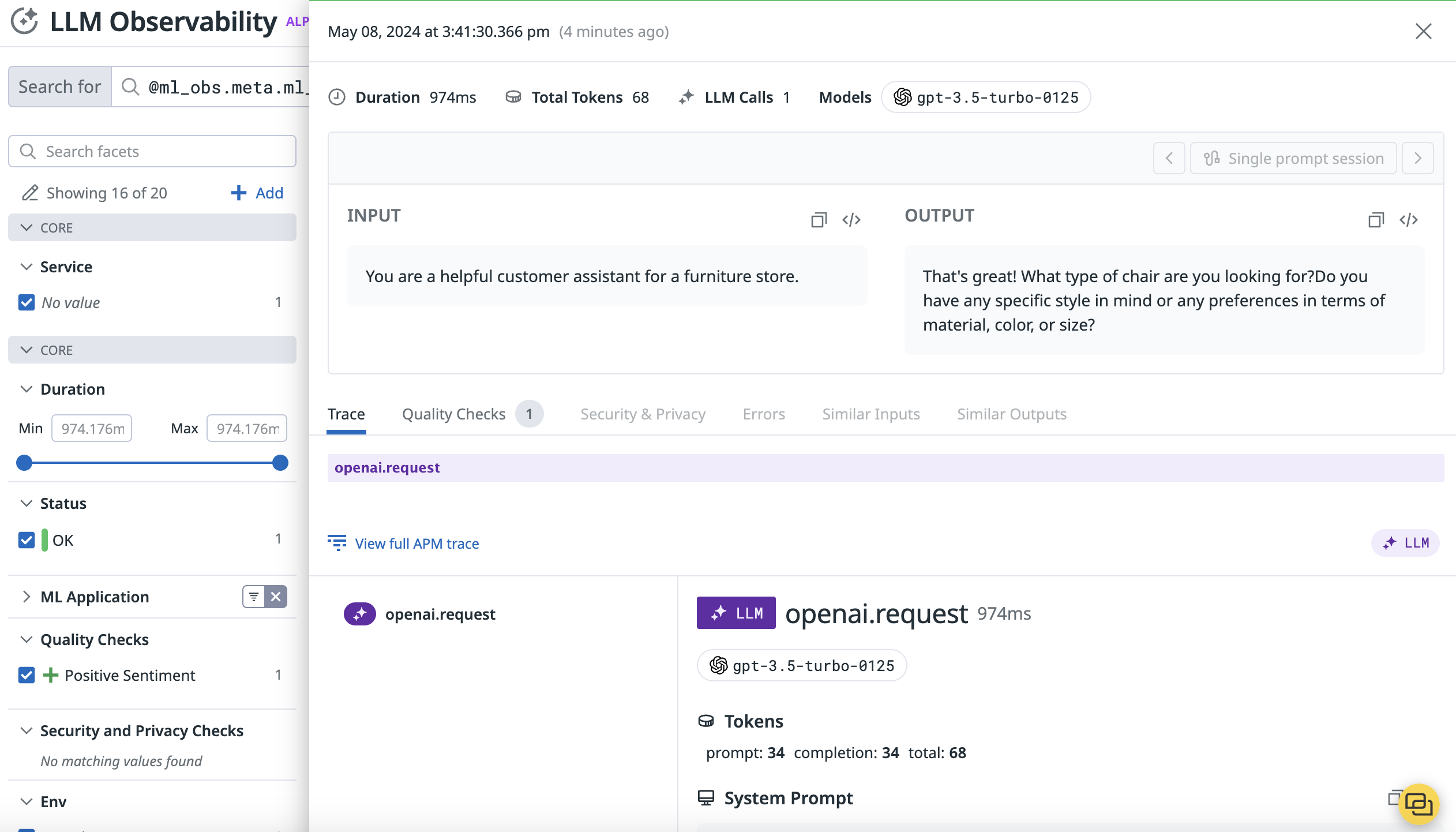

A trace of your LLM call should appear in the Traces tab of LLM Observability in Datadog.

The trace you see is composed of a single LLM span. The ddtrace-run command automatically traces LLM calls made through any of Datadog's supported integrations.

If your application consists of more elaborate prompting or complex chains or workflows involving LLMs, you can trace it using the instrumentation guide and the SDK documentation.
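As a rough illustration of what manual instrumentation can look like, the sketch below wraps two plain functions with the `workflow` and `task` decorators from `ddtrace.llmobs.decorators`. The function names and the message-building logic are hypothetical, the OpenAI call is left out to keep the example short, and the import fallback is only there so the sketch still runs where ddtrace is not installed. Treat the SDK documentation as the authority on decorator options.

```python
try:
    # Span decorators provided by the LLM Observability SDK.
    from ddtrace.llmobs.decorators import task, workflow
except ImportError:
    # Fallback so this sketch runs without ddtrace: decorators that do nothing.
    def _passthrough(func):
        return func

    task = workflow = _passthrough


@task
def build_messages(user_request):
    """Hypothetical helper: build the chat messages for one user request."""
    return [
        {"role": "system", "content": "You are a helpful customer assistant for a furniture store."},
        {"role": "user", "content": user_request},
    ]


@workflow
def handle_request(user_request):
    """Hypothetical workflow: prepare the prompt, then (in a real app) call the LLM."""
    messages = build_messages(user_request)
    # In a real application, the OpenAI call from quickstart.py would go here.
    return messages


messages = handle_request("I'd like to buy a chair for my living room.")
print(len(messages))
```

Spans are only sent to Datadog when LLM Observability is enabled, for example by running the script with the ddtrace-run command and environment variables from step 3.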