
Log Workspaces


Log Workspaces is in private beta.

Overview

During an incident investigation, you might need to run complex queries, such as combining attributes from multiple log sources or transforming log data, to analyze your logs. Use Log Workspaces to run queries to:

- Correlate multiple data sources

- Aggregate multiple levels of data

- Join data across multiple log sources and other datasets

- Extract data or add a calculated field at query time

- Add visualizations for your transformed datasets

Create a workspace and add a data source

You can create a workspace from the Log Workspaces page or from the Log Explorer.

On the Log Workspaces page:

- Click New Workspace.

- Click the Data source tile.

- Enter a query. The reserved attributes of the filtered logs are added as columns.

In the Log Explorer:

- Enter a query.

- Click More, next to Download as CSV, and select Open in Workspace.

- The workspace adds the log query to a data source cell. By default, the columns displayed in the Log Explorer are added to the data source cell.

Add a column to your workspace

In addition to the default columns, you can add your own columns to your workspace:

- From your workspace cell, click a log to open the detail side panel.

- Click the attribute you want to add as a column.

- From the pop-up menu, select Add "@your_column" to "your workspace" dataset.

Analyze, transform, and visualize your logs

You can add the following cells to:

- Include additional data sources such as reference tables

- Use SQL to join data

- Transform, correlate, and visualize the data

Cells that depend on other cells are automatically updated when any cell they depend on changes.
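To make the update behavior concrete, here is a toy model of it in Python. It is not Datadog's implementation: it assumes only that each cell knows which cells it reads from, and it uses the standard-library `graphlib.TopologicalSorter` so that a cell is recomputed only after its inputs. The cell names come from the example workspace described later on this page.

```python
from graphlib import TopologicalSorter

# Toy dependency graph modeled on the example workspace on this page:
# each cell maps to the set of cells it reads from.
DEPENDS_ON = {
    "trade_start_logs": set(),
    "trade_execution_logs": set(),
    "trading_platform_users": set(),
    "parsed_execution_logs": {"trade_execution_logs"},
    "transaction_record": {"trade_start_logs", "trade_execution_logs"},
    "transaction_record_with_names": {"transaction_record", "trading_platform_users"},
}


def cells_to_refresh(changed: str) -> list[str]:
    """Return every cell downstream of `changed`, ordered so that each cell
    appears after all of the cells it depends on."""
    # Grow the set of stale cells until no new dependents are found.
    dirty = {changed}
    grew = True
    while grew:
        grew = False
        for cell, deps in DEPENDS_ON.items():
            if cell not in dirty and deps & dirty:
                dirty.add(cell)
                grew = True
    # Order the whole graph topologically, then keep only the stale cells.
    order = TopologicalSorter(DEPENDS_ON).static_order()
    return [cell for cell in order if cell in dirty and cell != changed]
```

In this model, changing the trade_execution_logs query refreshes parsed_execution_logs and transaction_record, and then transaction_record_with_names, while changing only the trading_platform_users reference table refreshes just transaction_record_with_names.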

At the bottom of your workspace, click any of the cell tiles to add it to your workspace. After adding a cell, you can click the dataset on the left side of your workspace page to go directly to that cell.

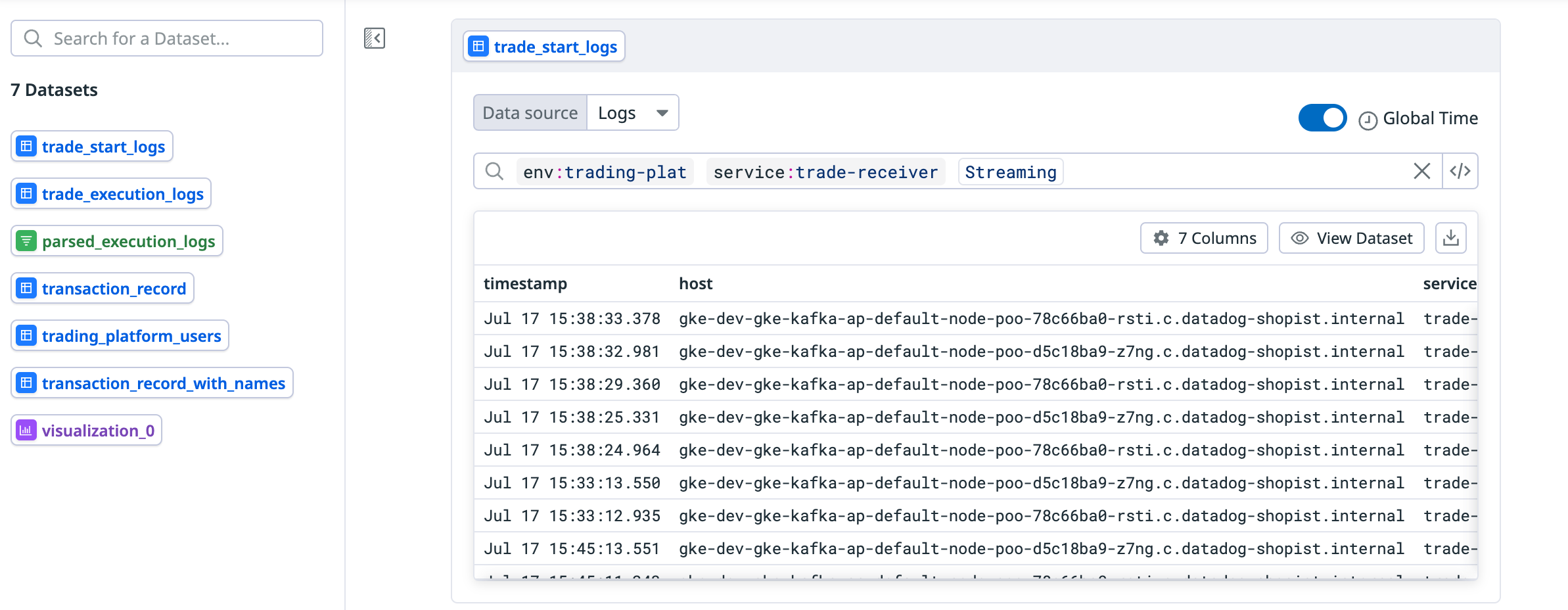

Data source cell

You can add a logs query or a reference table as a data source.

- Click the Data source tile.

- To add a reference table:

  - Select Reference table in the Data source dropdown.

  - Select the reference table you want to use.

- To add a logs data source:

  - Enter a query. The reserved attributes of the filtered logs are added as columns.

  - Click datasource_x at the top of the cell to rename the data source.

  - Click Columns to see the available columns. Click "as" for a column to add an alias.

  - To add additional columns to the dataset:

    a. Click a log.

    b. Click the cog next to the facet you want to add as a column.

    c. Select Add…to…dataset.

- Click the download icon to export the dataset as a CSV.

Analysis cell

- Click the Analysis tile to add a cell that queries data from any of the data sources. You can query your data with natural language or SQL. An example using natural language:

  select only timestamp, customer id, transaction id from the transaction logs.

- If you are using SQL, click Run to run the SQL commands.

- Click the download icon to export the dataset as a CSV.

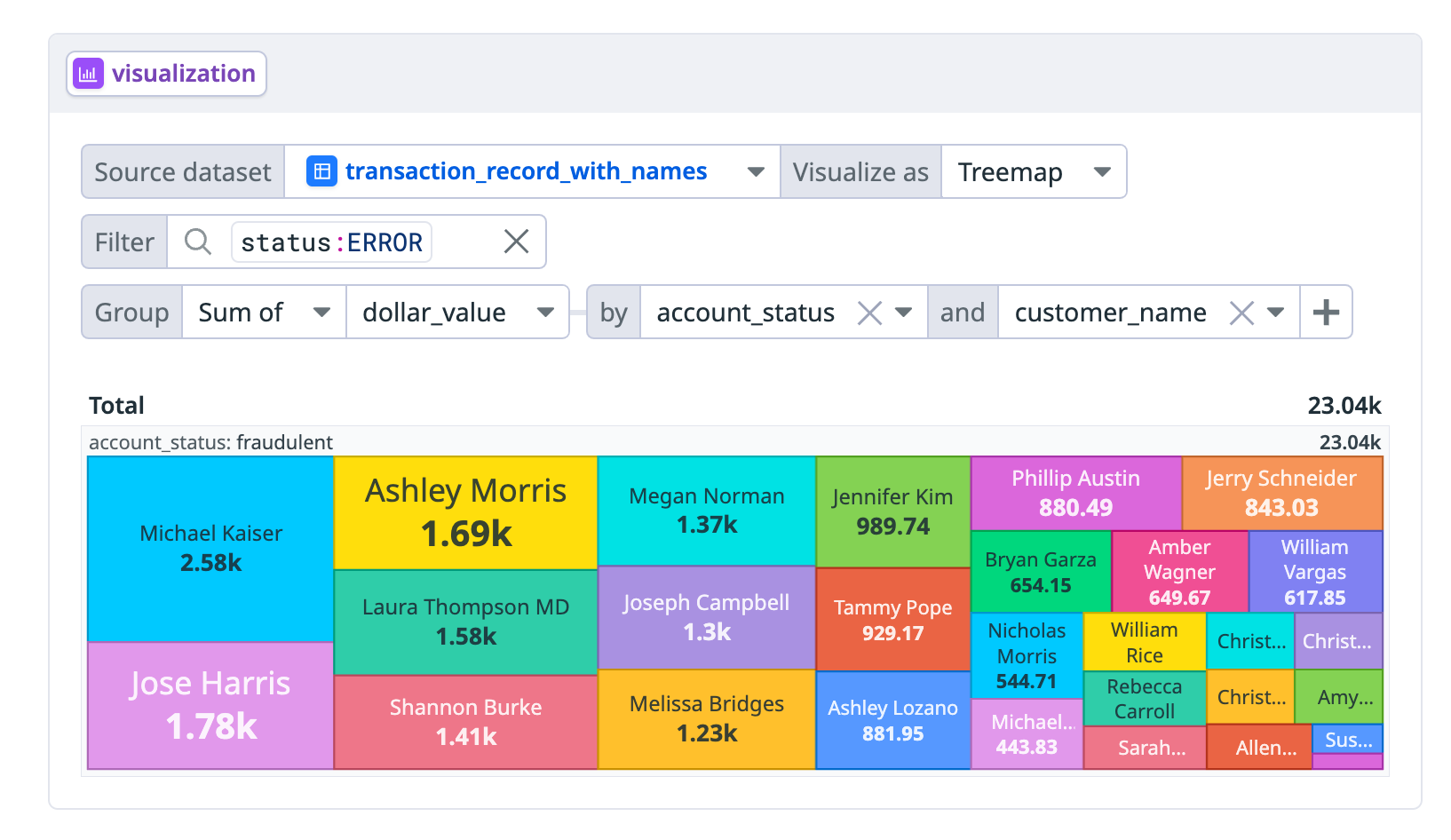
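The natural-language request above corresponds roughly to a single SELECT statement. The sketch below runs one plausible SQL form of it against an in-memory SQLite table, using Python's standard `sqlite3` module. The table name `transaction_logs`, its columns, and its rows are assumptions for illustration (the values are borrowed from the example walkthrough on this page). SQLite is only a stand-in, and its SQL dialect may differ from the one Log Workspaces accepts.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Hypothetical table standing in for "the transaction logs".
conn.execute(
    "CREATE TABLE transaction_logs "
    "(timestamp TEXT, customer_id TEXT, transaction_id TEXT, dollar_value REAL)"
)
conn.executemany(
    "INSERT INTO transaction_logs VALUES (?, ?, ?, ?)",
    [
        ("May 29 11:09:28.000", "92446", "085cc56c-a54f", 838.32),
        ("May 29 10:59:29.000", "78037", "b1fad476-fd4f", 479.96),
    ],
)

# One SQL query that expresses the natural-language request:
# keep only the timestamp, customer ID, and transaction ID columns.
rows = conn.execute(
    "SELECT timestamp, customer_id, transaction_id FROM transaction_logs"
).fetchall()
```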

Visualization cell

Add the Visualization cell to display your data as a:

- Table

- Top list

- Timeseries

- Treemap

- Pie chart

- Scatterplot

- Click the Visualization tile.

- Select the data source you want to visualize in the Source dataset dropdown menu.

- Select your visualization method in the Visualize as dropdown menu.

- Enter a filter if you want to filter to a subset of the data, for example, status:error. If you are using an analysis cell as your data source, you can also filter the data in SQL first.

- If you want to group your data, click Add Aggregation and select the information you want to group by.

- Click the download button to export the data as a CSV.

Transformation cell

Use a Transformation cell to filter, aggregate, and extract data.

- Click the Transformation tile.

- Select the data source you want to transform in the Source dataset dropdown menu.

- Click the plus icon to add a Filter, Parse, Aggregate, or Limit function.

  - For Filter, add a filter query for the dataset.

  - For Parse, enter grok syntax to extract data into a separate column. In the from dropdown menu, select the column the data is extracted from. See the column extraction example.

  - For Aggregate, select what you want to group the data by in the dropdown menus.

  - For Limit, enter the number of rows of the dataset you want to display.

- Click the download icon to export the dataset as a CSV.
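The Filter, Aggregate, and Limit steps can be pictured as functions chained over the rows of the source dataset. The plain-Python sketch below illustrates only that chaining. The function names, the `service` column, and the rows are hypothetical, and Parse is left out because the column extraction example covers it.

```python
from collections import Counter

# Hypothetical rows; in a workspace they would come from the source dataset.
rows = [
    {"host": "shopist.internal", "status": "error", "service": "checkout"},
    {"host": "shopist.internal", "status": "info", "service": "checkout"},
    {"host": "shopist.internal", "status": "error", "service": "payments"},
    {"host": "shopist.internal", "status": "error", "service": "checkout"},
]


def filter_rows(rows, **conditions):
    """Filter step: keep rows whose columns equal every given value."""
    return [r for r in rows if all(r.get(k) == v for k, v in conditions.items())]


def aggregate_count(rows, group_by):
    """Aggregate step: count rows per value of `group_by`, largest group first."""
    return Counter(r[group_by] for r in rows).most_common()


def limit(rows, n):
    """Limit step: keep only the first n rows."""
    return rows[:n]


# Filter to errors, count them per service, then keep the top row.
result = limit(aggregate_count(filter_rows(rows, status="error"), "service"), 1)
```

Each step takes the previous step's output as its input, so the order of the functions in the cell matters: filtering before aggregating counts only error rows.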

Column extraction example

The following is an example dataset:

| timestamp | host | message |
|---|---|---|
| May 29 11:09:28.000 | shopist.internal | Submitted order for customer 21392 |
| May 29 10:59:29.000 | shopist.internal | Submitted order for customer 38554 |
| May 29 10:58:54.000 | shopist.internal | Submitted order for customer 32200 |

Use the following grok syntax to extract the customer ID from the message and add it to a new column called customer_id:

Submitted order for customer %{notSpace:customer_id}

This is the resulting dataset in the transformation cell after the extraction:

| timestamp | host | message | customer_id |
|---|---|---|---|
| May 29 11:09:28.000 | shopist.internal | Submitted order for customer 21392 | 21392 |
| May 29 10:59:29.000 | shopist.internal | Submitted order for customer 38554 | 38554 |
| May 29 10:58:54.000 | shopist.internal | Submitted order for customer 32200 | 32200 |
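To show what the %{notSpace:customer_id} matcher does, the sketch below translates that one grok matcher into a Python regular expression with a named group (`\S+`, one or more non-space characters) and applies it to the messages from the example dataset. It is an approximation, not Datadog's grok parser. The helper name `grok_to_regex` is hypothetical, it supports only `notSpace`, and it uses `re.search`, which assumes the pattern may match anywhere in the message.

```python
import re


def grok_to_regex(pattern: str) -> re.Pattern:
    """Translate %{notSpace:name} matchers into named regex groups.
    All other text in the pattern is matched literally."""
    # With one capture group, re.split alternates literal text and field names.
    parts = re.split(r"%\{notSpace:(\w+)\}", pattern)
    regex = ""
    for i, part in enumerate(parts):
        regex += re.escape(part) if i % 2 == 0 else f"(?P<{part}>\\S+)"
    return re.compile(regex)


messages = [
    "Submitted order for customer 21392",
    "Submitted order for customer 38554",
    "Submitted order for customer 32200",
]
matcher = grok_to_regex("Submitted order for customer %{notSpace:customer_id}")
# Extract the customer ID from each message into a new "column".
customer_ids = [matcher.search(m).group("customer_id") for m in messages]
```

The same helper applied to the walkthrough's transaction %{notSpace:transaction_id} pattern pulls 56519 out of "Executing trade for transaction 56519".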

Text cell

Click the Text tile to add a Markdown cell for information and notes.

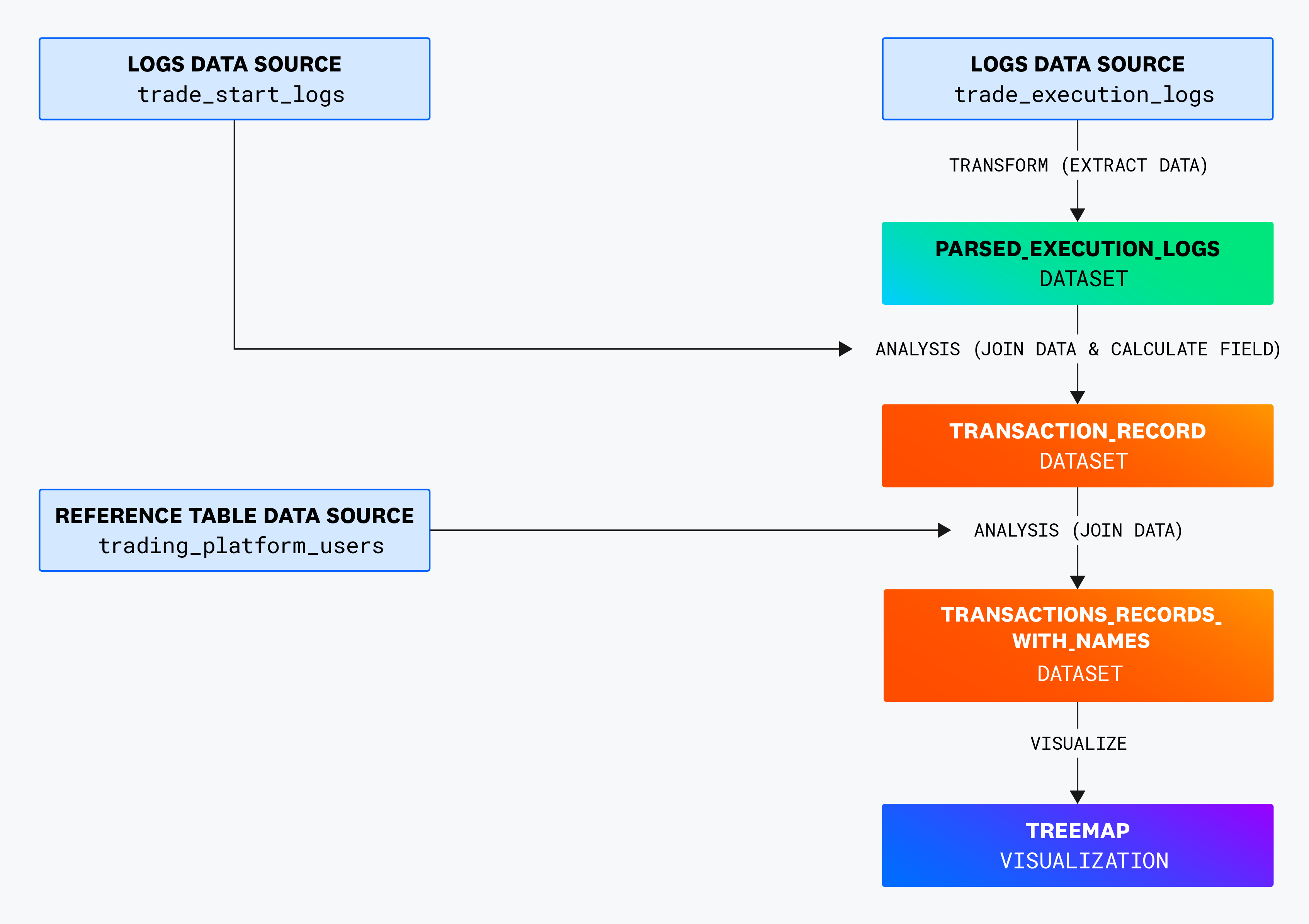

An example workspace

This example workspace has:

- Three data sources:

  - trade_start_logs

  - trade_execution_logs

  - trading_platform_users

- Three derived datasets, which hold the results of filtering, grouping, or querying data with SQL:

  - parsed_execution_logs

  - transaction_record

  - transaction_record_with_names

- One treemap visualization.

This diagram shows the different transformation and analysis cells the data sources go through.

Example walkthrough

The example starts with two logs data sources:

- trade_start_logs

- trade_execution_logs

The next cell in the workspace is the transformation cell parsed_execution_logs. It uses the following grok parsing syntax to extract the transaction ID from the message column of the trade_execution_logs dataset and add it to a new column called transaction_id:

transaction %{notSpace:transaction_id}

An example of the resulting parsed_execution_logs dataset:

| timestamp | host | message | transaction_id |
|---|---|---|---|
| May 29 11:09:28.000 | shopist.internal | Executing trade for transaction 56519 | 56519 |
| May 29 10:59:29.000 | shopist.internal | Executing trade for transaction 23269 | 23269 |
| May 29 10:58:54.000 | shopist.internal | Executing trade for transaction 96870 | 96870 |
| May 31 12:20:01.152 | shopist.internal | Executing trade for transaction 80207 | 80207 |

The analysis cell transaction_record uses the following SQL command to join the trade_start_logs and trade_execution_logs datasets on transaction_id, select specific columns, and rename the status INFO to OK:

SELECT
    start_logs.timestamp,
    start_logs.customer_id,
    start_logs.transaction_id,
    start_logs.dollar_value,
    CASE
        WHEN executed_logs.status = 'INFO' THEN 'OK'
        ELSE executed_logs.status
    END AS status
FROM
    trade_start_logs AS start_logs
JOIN
    trade_execution_logs AS executed_logs
ON
    start_logs.transaction_id = executed_logs.transaction_id;

An example of the resulting transaction_record dataset:

| timestamp | customer_id | transaction_id | dollar_value | status |
|---|---|---|---|---|
| May 29 11:09:28.000 | 92446 | 085cc56c-a54f | 838.32 | OK |
| May 29 10:59:29.000 | 78037 | b1fad476-fd4f | 479.96 | OK |
| May 29 10:58:54.000 | 47694 | cb23d1a7-c0cb | 703.71 | OK |
| May 31 12:20:01.152 | 80207 | 2c75b835-4194 | 386.21 | ERROR |
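To check what the transaction_record SQL does, the sketch below runs it unchanged against SQLite through Python's `sqlite3` module. The input rows are hypothetical: they are reverse-engineered from two rows of the output table above and keep only the columns the query needs. SQLite stands in for the workspace's SQL engine, and the results are sorted in Python because the query has no ORDER BY.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Hypothetical inputs holding only the columns the query reads.
conn.execute(
    "CREATE TABLE trade_start_logs "
    "(timestamp TEXT, customer_id TEXT, transaction_id TEXT, dollar_value REAL)"
)
conn.execute("CREATE TABLE trade_execution_logs (transaction_id TEXT, status TEXT)")
conn.executemany(
    "INSERT INTO trade_start_logs VALUES (?, ?, ?, ?)",
    [
        ("May 29 11:09:28.000", "92446", "085cc56c-a54f", 838.32),
        ("May 31 12:20:01.152", "80207", "2c75b835-4194", 386.21),
    ],
)
conn.executemany(
    "INSERT INTO trade_execution_logs VALUES (?, ?)",
    [("085cc56c-a54f", "INFO"), ("2c75b835-4194", "ERROR")],
)

# The query from the transaction_record cell, unchanged.
TRANSACTION_RECORD_SQL = """
SELECT
    start_logs.timestamp,
    start_logs.customer_id,
    start_logs.transaction_id,
    start_logs.dollar_value,
    CASE
        WHEN executed_logs.status = 'INFO' THEN 'OK'
        ELSE executed_logs.status
    END AS status
FROM
    trade_start_logs AS start_logs
JOIN
    trade_execution_logs AS executed_logs
ON
    start_logs.transaction_id = executed_logs.transaction_id;
"""

# Sort in Python because the query itself has no ORDER BY.
rows = sorted(conn.execute(TRANSACTION_RECORD_SQL).fetchall())
```

The CASE expression rewrites INFO to OK and passes every other status, such as ERROR, through unchanged.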

Then the reference table trading_platform_users is added as a data source:

| customer_name | customer_id | account_status |
|---|---|---|
| Meghan Key | 92446 | verified |
| Anthony Gill | 78037 | verified |
| Tanya Mejia | 47694 | verified |
| Michael Kaiser | 80207 | fraudulent |

The analysis cell transaction_record_with_names runs the following SQL command to join the transaction_record dataset with trading_platform_users, appending each customer's name and account status as columns:

SELECT tr.timestamp, tr.customer_id, tpu.customer_name, tpu.account_status, tr.transaction_id, tr.dollar_value, tr.status
FROM transaction_record AS tr
LEFT JOIN trading_platform_users AS tpu ON tr.customer_id = tpu.customer_id;

An example of the resulting transaction_record_with_names dataset:

| timestamp | customer_id | customer_name | account_status | transaction_id | dollar_value | status |
|---|---|---|---|---|---|---|
| May 29 11:09:28.000 | 92446 | Meghan Key | verified | 085cc56c-a54f | 838.32 | OK |
| May 29 10:59:29.000 | 78037 | Anthony Gill | verified | b1fad476-fd4f | 479.96 | OK |
| May 29 10:58:54.000 | 47694 | Tanya Mejia | verified | cb23d1a7-c0cb | 703.71 | OK |
| May 31 12:20:01.152 | 80207 | Michael Kaiser | fraudulent | 2c75b835-4194 | 386.21 | ERROR |
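A LEFT JOIN keeps every transaction even when its customer_id has no match in the reference table. In that case the customer columns are empty (NULL). The SQLite sketch below shows this with one row from the example plus one hypothetical transaction (customer 11111, transaction hypothetical-id) that is not in trading_platform_users. The tables are created directly from the example values, which is an assumption for illustration, and SQLite only stands in for the workspace's SQL engine.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE transaction_record (timestamp TEXT, customer_id TEXT, "
    "transaction_id TEXT, dollar_value REAL, status TEXT)"
)
conn.execute(
    "CREATE TABLE trading_platform_users "
    "(customer_name TEXT, customer_id TEXT, account_status TEXT)"
)
conn.executemany(
    "INSERT INTO transaction_record VALUES (?, ?, ?, ?, ?)",
    [
        ("May 31 12:20:01.152", "80207", "2c75b835-4194", 386.21, "ERROR"),
        # Hypothetical row whose customer is missing from the reference table.
        ("Jun 01 09:00:00.000", "11111", "hypothetical-id", 10.0, "OK"),
    ],
)
conn.executemany(
    "INSERT INTO trading_platform_users VALUES (?, ?, ?)",
    [("Michael Kaiser", "80207", "fraudulent"), ("Meghan Key", "92446", "verified")],
)

# The query from the transaction_record_with_names cell.
ENRICH_SQL = """
SELECT tr.timestamp, tr.customer_id, tpu.customer_name, tpu.account_status,
       tr.transaction_id, tr.dollar_value, tr.status
FROM transaction_record AS tr
LEFT JOIN trading_platform_users AS tpu ON tr.customer_id = tpu.customer_id;
"""

# Index the result rows by transaction_id (the fifth selected column).
by_id = {row[4]: row for row in conn.execute(ENRICH_SQL)}
```

With a plain JOIN instead, the hypothetical transaction would disappear from the result rather than appear with empty customer fields.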

Finally, a treemap visualization cell is created with the transaction_record_with_names dataset filtered for status:error logs and grouped by dollar_value, account_status, and customer_name.
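The filter-and-group step behind the treemap can be approximated in plain Python. In this sketch the rows come from the transaction_record_with_names example above, status:error is assumed to match the ERROR status case-insensitively, and each group's size is a simple row count. The measure the treemap actually uses to size its tiles is not specified on this page, and the helper name `treemap_groups` is hypothetical.

```python
from collections import defaultdict

# Rows from the transaction_record_with_names example (selected columns only).
rows = [
    {"customer_name": "Meghan Key", "account_status": "verified", "dollar_value": 838.32, "status": "OK"},
    {"customer_name": "Anthony Gill", "account_status": "verified", "dollar_value": 479.96, "status": "OK"},
    {"customer_name": "Tanya Mejia", "account_status": "verified", "dollar_value": 703.71, "status": "OK"},
    {"customer_name": "Michael Kaiser", "account_status": "fraudulent", "dollar_value": 386.21, "status": "ERROR"},
]


def treemap_groups(rows, status, keys):
    """Keep rows whose status matches (case-insensitively, an assumption)
    and count the rows for each combination of the grouping keys."""
    groups = defaultdict(int)
    for row in rows:
        if row["status"].lower() == status.lower():
            groups[tuple(row[k] for k in keys)] += 1
    return dict(groups)


groups = treemap_groups(rows, "error", ["dollar_value", "account_status", "customer_name"])
```

Only the fraudulent account's failed transaction survives the filter, so the treemap in this example has a single tile.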
